Check out the current public test version here!

Brahma is a 3D game engine with a rather retrofuturistic design, intended for small studios and solo developers. It's being written from scratch in C++ using the standard Windows API and no third-party libraries. This technology introduces an entirely new class of low-latency real-time engines that impose special timing requirements, treating frames as video fields with a target time budget of 2-4 ms each, down from the 16-33 ms frame budgets normally seen in game engines. It evolves in a different way than other modern engines, rejecting conventional BSP, Z-buffer, floating-point coordinates, and most of the lame screen-space effects in favor of innovative and efficient techniques. The engine is non-Euclidean capable to some degree; it also supports true displacement mapping for sectors as a means to virtualize geometry that affects collisions. The engine is also carefully designed to be easy and convenient to develop for, yet versatile and adaptable to any needs.

Highlights

- Lightweight, instant warmup, loading any content in background

- No Z-buffer, G-buffer etc.; no near/far clip plane required

- A custom software-based rasterization pipeline not governed by triangles

- Correctly ordered transparency without compromise

- Hierarchical frame buffer for translucent reflections and other effects

- No screen-space mockery of what should be done within the scene

- An integrated working environment that unifies the edit tools with game logic

- A plethora of custom, compact and well-standardized formats

- A hybrid bitmap/audio format with unique features

- Optimized for stable and stutter-free high framerates (in the 120+ fps range)

- Favors simple yet memory-efficient representations like indexed color or shaped texels

- Resorts to fixed-point arithmetic and LUT-based functions instead of floats

- Built-in color correction and support for HDR shading even in indexed color

- A full-blown post-processing step that models physical camera imperfections

- A physical sky system with actual stars drawn as points

- Heightmapped surfaces without tessellation, affecting scene collisions

- Precise timing of game events and user inputs; no global game tics

- A fault-tolerant design with industrial and CAD applications in mind

My background

I've always dreamed of making computer games powered by my own 3D engine. I've been learning computer programming since 1999, coding various things that were inspired by my favorite games. The digital world was my escape from the gloomy post-USSR life. I worked in Windows and MS-DOS environments and got used to them. My first programming language was Visual Basic 4 (I quickly moved to VB6, which was mainstream at the time). Although my first attempts to create an engine were pointless garbage, some of my results were promising, and I remember coming up with my own vector graphics format and a simple editor back in 2001. I loved drawing and 3D modeling as a kid, and enjoyed making short animations in early 3D Studio MAX versions. I also enjoyed using ActionScript in Macromedia Flash 4, but dropped it since I was passionate about programming all graphics by hand. It was fascinating to allocate a byte array and send it to the screen. I'm also into music and sound, and have used a lot of sound editors since Cool Edit '95.

Things changed a lot in 2006 when I moved my development to Visual C++. I was a fan of the classic Build engine games such as Duke Nukem 3D and Shadow Warrior, and used to code some crazy things with the EDuke32 source port. Since I had some great ideas for my own games, I was striving for independent game development and chose to use my own technology, which I started in 2013. The first stone of the 3D part was laid in late 2017 when I tried to replicate the sector-based approach used by the Build engine.

Nobody recognized my dedication back in the day. My father told me it's impossible to achieve any success by working with personal passion and intuition, meaning that one should obey and do what everyone else does. But I can't buy it. I have to pave the way on my own despite my disability and life's challenges. I studied in a technical college once, but dropped out in 2012 to devote more time to hobby projects and self-education. At the same time I felt the urge to learn about art, music and design, being kind of an artist/musician who also writes software for his own purposes.

Philosophy of this engine

The reason why I've chosen to make a new engine is that I adhere to a different philosophy than the one prevalent in the game industry: I give the engineering part both an aesthetic and a pragmatic value, look for elegant ways to achieve more with simple means, and appreciate diversity and uniqueness. Besides that, I wish to learn how games are created from basic algorithmic structures, go back to the basics and experiment with various approaches in order to discover gems that were overlooked by other developers. While doing this, I have strong intuitions and views on which directions to prioritize. I believe that having a stable high framerate, low input lag, and never seeing any loading screens is more important for delivering a great and immersive gaming experience than the use of complex shaders and high polygon counts, for instance. Also, I'm convinced that mesh support shouldn't be limited to flat triangles and that the collision model should normally stay on par with the visuals, avoiding any simplified proxy models for handling collisions in order to implement an authentic "what you see is what you interact with" approach. As a side note, if a small indie game is built with modern tech, it tends to be overly bloated and typically feels kind of fake and generic, as the oldschool look and feel is only imitated rather than being such "under the hood". My engine is an example of an integrated toolset with built-in facilities to automate content generation and management, making games take up less space, load faster and be easier to create at the same time.

Development notes

Although it's deemed to be a massively parallel task (exploited by dedicated graphics hardware), the entire process of rendering a 3D scene is rather serial by nature. Unless you are using a culling technique like Z-buffering, you can't draw an object into the frame buffer until everything behind it has been drawn. So, although a simple convex set of polygons can be filled in any order, the order in which a complex scene has to be drawn is determined by its depth relations. As for the general hidden surface culling algorithm, it basically works by progressively disclosing the parts of the level that are visible from the preceding render nodes. This is also a sequential iterative task, no matter whether one chooses to use a BSP tree or sectors. It turns out that only certain specialized types of rendering tasks are easily parallelizable by dedicated ASIC hardware (e.g. vertex transformations, textured polygon fill, shading, postprocessing or some forms of raytracing/ray marching). The number of causal dependencies on the way from a bunch of 3D geometry data to a complete rendered frame is such that the process is only partially parallelizable: it requires careful effort to keep the execution units synchronized, and the overall speedup is limited by Amdahl's law. This, together with the pursuit of maximum flexibility, is the main reason why my renderer is implemented in software; any accelerated versions are also expected to use the software renderer as a reference.
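
For reference, Amdahl's law mentioned above can be written out as follows. The symbols p (the fraction of frame time that can run in parallel) and n (the number of execution units) are notation assumed here for illustration, and the numbers in the example are hypothetical, not measurements from the engine:

```latex
S(n) = \frac{1}{(1 - p) + \dfrac{p}{n}},
\qquad
\lim_{n \to \infty} S(n) = \frac{1}{1 - p}
```

For example, if 80% of the work parallelizes (p = 0.8), then 8 units give S(8) = 1 / (0.2 + 0.1) ≈ 3.3, and no number of units can push the speedup beyond 5x.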

In addition, I require the pipeline to be deterministic and well-standardized on all supported hardware so that the output frames can be used by the game logic when necessary. The engine also doesn't use a conventional Z-buffer; it uses span records instead and renders game entities along lines of constant depth in back-to-front order, which ensures the correct order of transparency while keeping the renderer rather fast even without specific hardware acceleration (which normally requires trading flexibility for speed).

Despite being written in C++17, the code relies on generic programming rather than object-oriented programming, with a minimum of machine abstraction and mostly manual memory management that groups all similar items into arrays. Basically, the engine is a huge-ass C program that uses C++ templates and member functions to reduce repetition and facilitate internal code reuse. Keeping the code as generic as possible helps greatly reduce its size (and the resulting bugs) and improve maintainability without sacrificing much speed. To ensure the best performance, I've developed special built-in facilities for fixed-point arithmetic based on look-up tables, which dramatically speed up the calculation of logarithms, square roots and other functions while maintaining reasonable precision. I have also designed a custom character encoding and string object.
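
A minimal sketch of how a table-driven fixed-point square root might look. This is not the engine's actual code: the Q16.16 format, the 256-entry table, and the names `isqrt64`, `SqrtTable`, `kSqrt` and `sqrt_q16` are all assumptions made for illustration. The table is built at compile time with integer math only, and each lookup uses just the top 8 bits of the normalized input, so results can come out up to roughly 1% low.

```cpp
#include <cassert>
#include <cstdint>

// Bitwise integer square root (floor). Used only to fill the table.
constexpr std::uint32_t isqrt64(std::uint64_t v) {
    std::uint64_t res = 0;
    std::uint64_t bit = std::uint64_t{1} << 62;
    while (bit > v) bit >>= 2;
    while (bit != 0) {
        if (v >= res + bit) {
            v -= res + bit;
            res = (res >> 1) + bit;
        } else {
            res >>= 1;
        }
        bit >>= 2;
    }
    return static_cast<std::uint32_t>(res);
}

// Hypothetical table: entry i holds floor(sqrt(i * 2^24)), which fits in 16 bits.
struct SqrtTable {
    std::uint16_t v[256];
    constexpr SqrtTable() : v{} {
        for (std::uint32_t i = 0; i < 256; ++i)
            v[i] = static_cast<std::uint16_t>(isqrt64(std::uint64_t{i} << 24));
    }
};
constexpr SqrtTable kSqrt{};

// Square root of an unsigned Q16.16 value, returned as Q16.16.
constexpr std::uint32_t sqrt_q16(std::uint32_t x) {
    if (x == 0) return 0;
    int shift = 0;
    // Normalize by an even shift so that the value lands in [2^30, 2^32).
    while ((x & 0xC0000000u) == 0) {
        x <<= 2;
        shift += 2;
    }
    // The top 8 bits (64..255) select the table entry.
    std::uint32_t root = kSqrt.v[x >> 24];
    // The square root halves the normalization shift; the extra << 8
    // converts sqrt(raw) into Q16.16.
    return (root << 8) >> (shift / 2);
}
```

With these assumptions, `sqrt_q16(4u << 16)` returns `2u << 16` exactly, since perfect squares that fall on a table entry incur no truncation. Interpolating between neighboring entries would be one way to tighten the error if more precision were needed.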

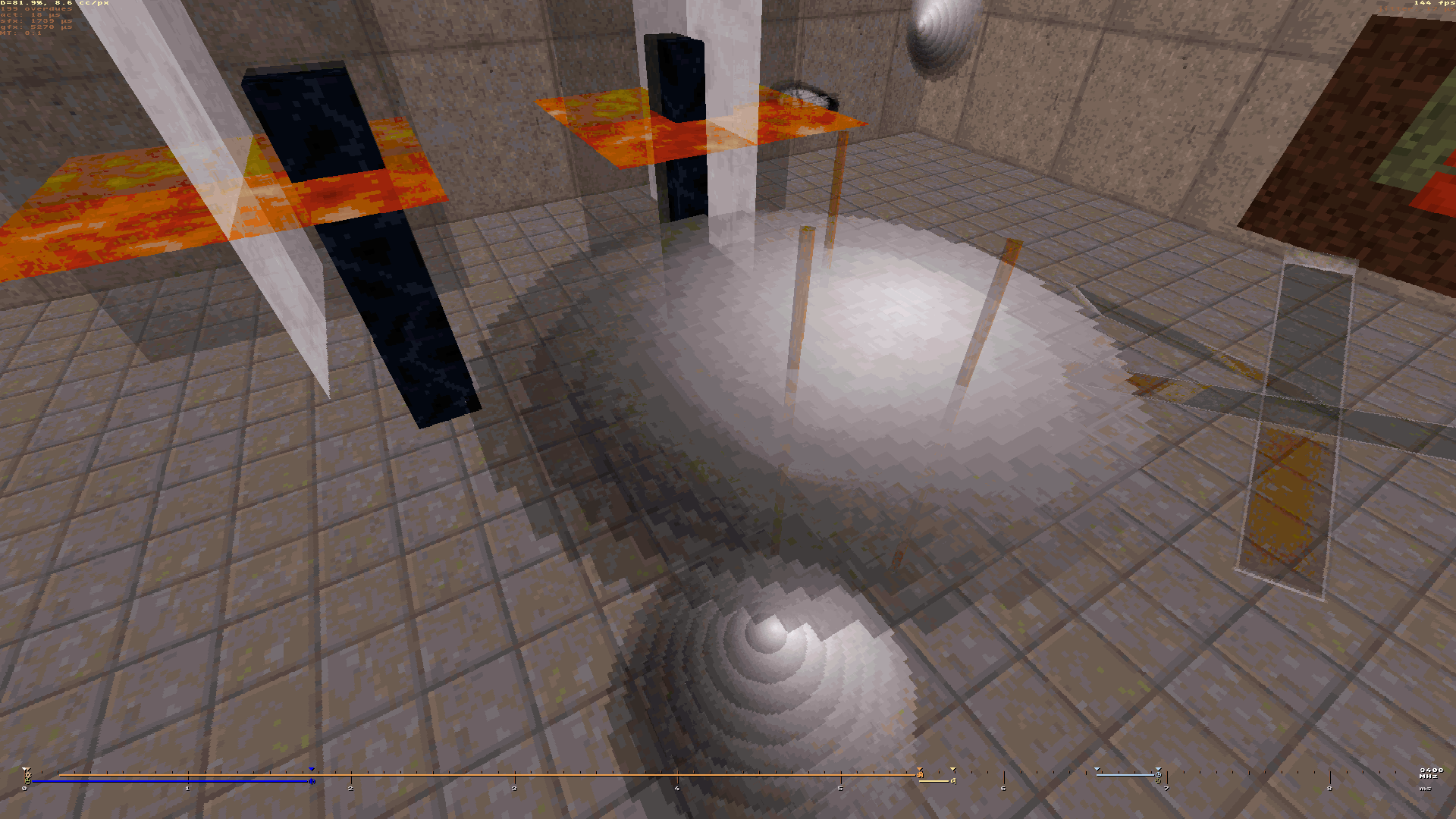

Engine progress

In early 2018, I learned how to scan sector-based maps like the classic Build engine does, and began experimenting with various CPU-based rendering techniques. Later I managed to achieve free look with correct perspective, native room-over-room rendering, true planar reflections with Fresnel falloff, anti-aliasing, a limited form of HDR rendering, post-processing effects, and more. With multiprocessing engaged, the engine can easily surpass Build's performance on many-core processors. I have yet to do the physics part and proper 3D sound, though.

The map format is sector-based like the good ol' Build engine, but it was implemented from scratch and has evolved far beyond Build's principles with a ton of new features, such as heightmapping that can be used together with slopes, multitexturing with possible reuse of the same material on many surfaces, reuse of the same floor or ceiling surface in multiple sectors, and direct references to objects without tag attributes. I propose support for a variety of rendering techniques not limited to flat polygons only.

Now I'm developing a working prototype that features a renderer fully implemented in software (thus not restricted to specific hardware features), somewhat inspired by Ken Silverman's Build engine but written from scratch. Further iterations will bring even more capabilities and speed through integration with CUDA, Direct2D, ASIO or other powerful APIs. Eventually the engine (along with the underlying framework) could be ported to other environments based on the x86-64 ISA if there's enough interest in doing that.

The Z-buffering technique has been used in real-time 3D computer graphics for decades and has proved itself a viable way to solve the visibility problem at the level of individual pixels. Since the introduction of hardware 3D acceleration, the mainstream gaming industry has adopted this method for Z-culling, which can't be done consistently by simply sorting polygons by distance (the so-called painter's algorithm). Remembering a depth value for each screen pixel also enables lots of useful post-effects, such as the screen-space reflections and ambient occlusion that modern engines benefit from. However, as only one depth value is stored per pixel, the Z-buffer works only for opaque geometry, which turns out to be a major downside as various non-opaque stuff is becoming increasingly common in games.

To reduce the overdraw of opaque objects, one should normally sort the polygons from nearest to furthest. The idea is that pixels behind ones already drawn will be discarded by Z-testing, and the earlier we plot the closest pixels, the more hidden stuff we will discard subsequently. However, as non-opaque polygons can be seen through, they must be drawn in far-to-near order after all the opaque geometry is done to minimize unwanted artifacts. And in cases of overlapping or intersecting non-opaque (translucent or alpha-channeled) objects, you're likely to get multiple depth conflicts within the same pixel, and that's where conventional Z-buffering always fails. Imagine a lengthy alpha-channeled projectile flying through an alpha-channeled obstacle such that only a part of it is closer to the viewer. There are possible workarounds such as depth peeling, which requires two Z-buffers and takes multiple passes to render everything behind transparent polygons separately; the more layers of transparency you have, the more passes it takes to yield the correct look. Needless to say, this can turn out very inefficient for sufficiently complex scenes.

Not being able to maintain the correct order of transparency is the fundamental deficiency of Z-buffering, and it is responsible for some ugly artifacts we encounter in lots of game products. Z-fighting is another nasty thing that keeps haunting game developers. When working on my Brahma engine prototype renderer, I was confident about drawing everything along lines of constant depth, which seemed to be the optimal strategy for a software implementation with a slew of depth-dependent things such as fog and mipmapping. At some point I thought that if we augment this approach by processing all sprites and masks in the view at the same time, this will naturally grant us the ability to draw everything in the correct order without the need for any Z tests, so that it's enough to sort the objects by their furthest point from the image plane.
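
To illustrate why draw order matters for translucent surfaces and how sorting by furthest point restores it, here is a deliberately simplified single-pixel sketch. The `Layer` struct and the `blend` and `composite` functions are hypothetical names, and the real renderer works along lines of constant depth rather than per pixel; this only demonstrates the ordering rule under those assumptions.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical translucent layer covering one pixel.
struct Layer {
    std::int32_t farDepth;  // furthest point from the image plane (fixed-point)
    std::uint8_t color;     // single 8-bit channel for brevity
    std::uint8_t alpha;     // 0 = fully transparent, 255 = opaque
};

// Integer "over" blend with rounding: src drawn on top of dst.
inline std::uint8_t blend(std::uint8_t dst, std::uint8_t src, std::uint8_t a) {
    return static_cast<std::uint8_t>((src * a + dst * (255 - a) + 127) / 255);
}

// Sort layers far to near, then blend them in that order.
// The result no longer depends on the order the layers were submitted in.
std::uint8_t composite(std::vector<Layer> layers, std::uint8_t background) {
    std::stable_sort(layers.begin(), layers.end(),
                     [](const Layer& l, const Layer& r) {
                         return l.farDepth > r.farDepth;
                     });
    std::uint8_t px = background;
    for (const Layer& l : layers) px = blend(px, l.color, l.alpha);
    return px;
}
```

Blending a half-transparent white layer and a nearer half-transparent black layer over a black background gives a different pixel value depending on which one is drawn first, which is why the blend itself cannot be left to an arbitrary submission order.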

Of course, this approach requires a more elaborate rendering pipeline that can keep track of a variable-size bunch of objects being rasterized concurrently. But since I already had some facility for multitexturing, extending it to support multiple objects was a reasonable and logical step. I have yet to make the masks and voxel sprites render in the same fashion, but you can watch this video to get an idea of how it works in a real engine, and compare my results with the same map running in EDuke32.

What is hybrid temporal-spatial anti-aliasing?

For 120+ Hz display devices, Brahma engine offers a very efficient anti-aliasing solution called 4xTSAA. Owing to subpixel precision of engine's geometry...

I have several questions:

1. Is this some sort of heavily modified version of the Build Engine?

2. Will it be able to load 3D models in MDL (GoldSrc), MD2, MD3, MD5 (Doom 3) and IQM formats?

3. Will it support ragdoll physics for 3D models?

4. Can it be used to create games that are not in first person, but third person, isometric view, top-down view, and side scrollers?

Hi! As stated before, the engine and its ecosystem are coded from scratch (I use the standard API without linking any third-party libraries). The basic principles are similar to those of the Build Engine, which I took inspiration from, but the formats and internal structures have little in common.

The engine can currently import MDL and MD2 formats (mesh only, no animation yet). I'm going to support the other formats you listed. You can help the project by providing me with the specifications of the formats you'd like to see supported. Also, I'm currently researching the possibility of decompiling BSP files into models or sector-based maps.

As soon as model animations and continuous physical simulations are working, ragdoll physics should be possible. I hope to use the same approach for ragdolls as for inverse kinematics.

I'm going to add a third-person camera after solving some issues with the first-person mode. As for other views and genres, the framework itself could potentially accommodate any game (search for NIP Minesweeper for a sample text-based game I made some years ago), although 2D games may need another renderer and map format. I've already prototyped a top-down/isometric renderer and some UI for an RTS game, but much of it remains unfinished.

Awesome, a test version!

I have downloaded and installed it, and it runs a Doom.wad, so I could walk through the game. It was all still static, but it showed the world. The skybox was odd and some screen tearing was occurring, but that probably has something to do with my settings.

That you released a beta/test/demo proves to me that you are still, after all these years, working very hard on this engine. And that things are coming along nicely.

Please keep working on this, as you do, and maybe one day this will be the new EDuke32/Mapster32!

greetings, a long time fan,

Leon

Thanks, Leon! Did you try to edit maps and game resources? The project is currently all about making content, not playing it, hence everything is static, even though the editor and the game itself come in the same environment. Overall, this release is meant to show that the engine is not vaporware and is in active development, even though much of it is incomplete at the moment.

I thought it had been impossible to get actual screen tearing using the legacy API since Windows Vista (DWM does its screen composition synchronized with vblank). However, microstuttering is still an issue for me (I'll probably switch to a more robust API next time).

Wow, what an awesome video (Custom rasterizer for 3D models (WIP)).

One small remark is that when you are very close to the models then at the edges of the screen they disappear. Not a big problem but it does look a bit odd. Great models, by the way, especially that car model, really awesome. Don't know if you made them yourself but if you did then that is some great modeling. And then at the end, that skybox with an image all around the player of the world. Fabulous!!

Leon

Thank you Leon!

As I noted in the description, those are some free models I found on Sketchfab and imported into the engine. I've come up with my own format for models that is both compact and versatile, and it ought to be well standardized, unlike many other formats.

The polygons may disappear near the edges due to imperfect Z-culling and frame projection postprocessing. I'm going to fix that and other artifacts as I further refine the renderer (and also add proper collision detection).

Sorry, I keep asking you this question every other year it seems, but how far are you with development? Is your Brahma engine developed enough for use as the engine in a (commercial) game? Because it seems you are now at a level that, for instance, the Build engine/Mapster32 can't reach.

Just very curious, and, also still interested in using it once it is finished.

greetings,

Leon

Thanks for asking about that! The engine has seen growing public interest lately. There was significant progress over the last year on the renderer, physics, engine tools and overall architecture. However, there are some fields that remain under-developed compared to others. For example, I'm somewhat held back by the lack of decent engine tools: they need a GUI, which in turn needs an upgraded event/string management system, etc. Also, I'm not satisfied with the functionality of my command-line interface. As everything needs to be implemented in the right sequence, it will take some time until actual games can be created. Engines normally take years to develop, even those done by experienced teams.

Thank you for explaining, will keep tracking your work.

success,

Leon

Wow, you clearly have worked hard! So many new functions and options added to your engine. Love the non-Euclidean portals!

Leon

Thanks for following my work, Leon! I wish I could implement all these features faster, but there are other things going around and sometimes I'm just not feeling good enough to be productive. Fortunately, my teammates help me a lot with game content I use for testing new stuff.

Also I'm going to capture some more videos to show off non-Euclidean maps and other cool things.

I know, I make maps/mods as you maybe know and it is not always easy to work on our projects. Sometimes we just don't feel like it, or normal life gets in the way.

Leon