Wow, Steam release. The core roguelike community has formed the majority of Cogmind's player base for a long while, but now we've had a wave of new players from Steam so we'll have to see how this wider audience, and a larger portion of players new to the genre, may have affected some of the stats.

For the past couple years I've been reporting stats based on player-submitted score sheets, which also include some preferences and other settings (you can sample those linked from the leaderboards if you haven't seen one before). These "stat summaries" have simply been going to the forums' High Scores thread, with the kinds of stats evolving over time along with an increase in their total number and quality.

Today it's finally time to take a deeper look at the data... Doing this sometimes helps with future design decisions, but it's also just fun :)

I did something similar back in 2015 for the Alpha Challenge, a metrics analysis in 7,500 words and several dozen graphs, but it's been a long time and we now have a lot more data to work with, plus we can do some interesting before-after comparisons.

Overview

In ten weeks of Beta 3 following its release on Steam, 33,181 score sheets were uploaded, compared to around 15,000 in all of Cogmind's more than 2-year history of prior releases. We technically even lost some 2,000 Beta 3 score sheets one day shortly after launching on Steam due to a little incident with the leaderboards being overwhelmed :P (wasn't designed for that kind of load! I did fix it the next day, though)

26,478 runs scored at least 500 points, the minimum required to be included in the scoring data. 65.2% of those runs were submitted anonymously, meaning the player did not set a Player Name in their options. That compares to a mere 5.4% for Beta 2, a change we can account for with two main factors:

- As of Beta 3, score uploads were changed from opt-in to opt-out, meaning a lot more submissions by players who otherwise wouldn't bother manually activating it in the options (or even know it was an option in the first place).

- Even though Cogmind uses non-Steam leaderboards, Steam players probably assume their in-game username is set automatically based on their account, which is not the case. (I've considered adding this feature, but decided not to for now.)

Anyway, lots of anonymous scores. Since we have so many non-anonymous submissions now, as of Beta 3 I removed anonymous scores from the leaderboards themselves, though they're still included in stats below except where mentioned. Overall 1,287 unique non-anonymous players submitted scores, as well as 4,389 unique anonymous players you don't see on the leaderboards. 342 initially anonymous players later set their Player Name and appeared normally.

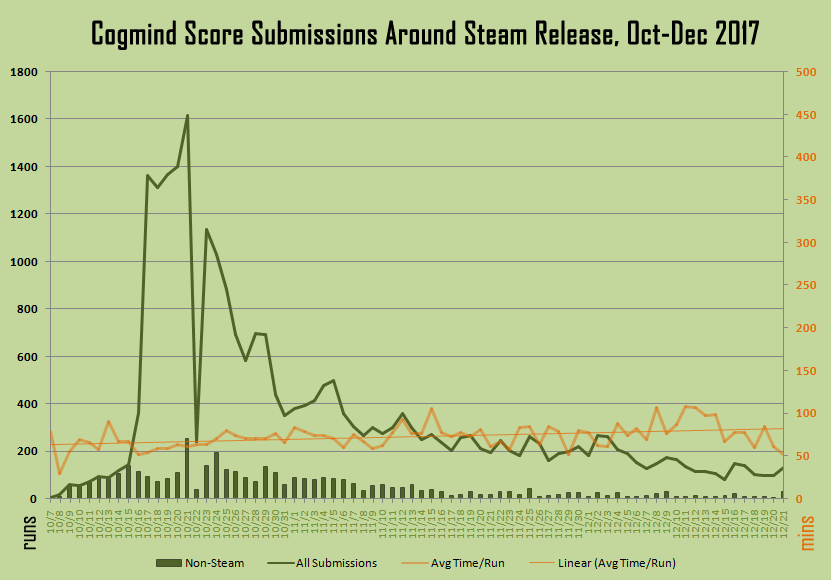

Counting only those runs included in the data (26k), you can see how the submissions gradually decline following the Steam release. (You can also clearly see the effect of the leaderboard system crapping out that day xD)

Some of the decline can of course be attributed to people trying the game out then waiting on it because it's "EA" or they have other things to do, and also to the fact that many players gradually improve their skills over time and survive for longer periods, leading to fewer submissions overall. As you can see I added average run length (in minutes) to the graph, and the trend line for that rises from 62.5 minutes to 83.8 minutes, a 34.1% increase across two and a half months.

Both off and on Steam, Beta 3 was played for a total of at least 1,541,666 minutes (25,694 hours!), though I excluded a number of suspect high-time records--Cogmind's run length records exclude idle time, but are also on rare occasions susceptible to oddities in a few system environments. And of course we don't have data for players who are completely offline or have deactivated uploading, and I also didn't tally sub-500 score sheets.
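The two headline figures above are simple ratios, so here's a quick Python check of them using only the numbers quoted in the text (the variable names are just for illustration):

```python
# Trend-line endpoints for average run length, in minutes (from the graph above)
trend_start = 62.5
trend_end = 83.8

# Relative increase across the ~2.5-month period
increase_pct = (trend_end - trend_start) / trend_start * 100
print(f"Run length trend: +{increase_pct:.1f}%")

# Total recorded play time, converted from minutes to hours
total_minutes = 1_541_666
total_hours = total_minutes / 60
print(f"Total play time: {total_hours:,.0f} hours")
```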

Scores and Wins

There was a steep drop in average score, to be expected with so many new players. It reversed the trend we've had over the past year, where scores were generally going up as 1) the world grew wider, 2) more sources of bonus points were added and 3) regular players got better and better. Alpha 14 to Beta 1 saw an 11% increase in average score (to 8,035), for example, then up another 5.3% to 8,461 during Beta 2. With Beta 3? Average score fell 47% to 4,503! :P

I'm sure we'll see it rise again later into 2018.
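For anyone who wants to double-check the release-over-release changes, this sketch recomputes them from the quoted averages alone:

```python
# Average score per release, as quoted in the text
beta1 = 8_035
beta2 = 8_461
beta3 = 4_503

def pct_change(old, new):
    """Percentage change from old to new (negative means a drop)."""
    return (new - old) / old * 100

print(f"Beta 1 -> Beta 2: {pct_change(beta1, beta2):+.1f}%")
print(f"Beta 2 -> Beta 3: {pct_change(beta2, beta3):+.1f}%")
print(f"Beta 3 as a share of Beta 2: {beta3 / beta2:.1%}")
```

It's easy to mix up the two Beta 3 figures here: the new average is about 53% *of* the old one, which means it dropped by about 47%.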

There was, however, a fairly large raw number of wins. In the 29 months prior to Beta 3 players won a total of 292 times, while during just the Beta 3 period there were 139 winning runs. Among the rapidly expanding player base we're seeing seriously dedicated new experts, some clocking hundreds of hours in just the past couple months.

There are seven different endings in Cogmind, and a few players have discovered them all, but during Beta 3 the spectrum of wins only covered the first four types. 79% of wins were the "default" ending, 1% were special ending #1, 11% were #2, and 9% were #3. Maybe we'll see some endings 4/5/6 in Beta 4 :) (these are the most challenging, two in particular are for super powerful builds).

Now that we actually have some players using the lower difficulty modes, it's also more meaningful to look at how many of those are winning. Wins by difficulty mode:

- Default: 101

- Easier: 4

- Easiest: 13

Comparing the number of winning players vs. the total playing each mode, the numbers look like what one might expect, so the modes are apparently doing their job. Winning player ratio by mode:

- Default: 38/1,183 (3.2%)

- Easier: 4/52 (7.7%)

- Easiest: 9/52 (17.3%)
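These ratios are just winners divided by players per mode (non-anonymous records only). Summing the two columns also recovers the overall totals of 1,287 non-anonymous players and 51 winners:

```python
# Winning players vs. total players per difficulty mode (non-anonymous only)
modes = {
    "Default": (38, 1_183),
    "Easier": (4, 52),
    "Easiest": (9, 52),
}

for mode, (winners, players) in modes.items():
    print(f"{mode}: {winners}/{players:,} ({winners / players:.1%})")

# Totals across all modes
total_winners = sum(w for w, _ in modes.values())
total_players = sum(p for _, p in modes.values())
print(f"All modes: {total_winners}/{total_players:,} ({total_winners / total_players:.1%})")
```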

The latter two modes are mostly made up of very new players, so in future versions I would expect their win rates to rise even faster than the default's, assuming no new large influx of players (and that players don't decide to move up to a higher difficulty).

I excluded 15 anonymous Roguelike Mode wins, and 6 anonymous Easiest Mode wins, from the above data. Altogether, 51 (4% of) unique non-anonymous players won at least one run of Beta 3.
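Adding the excluded anonymous wins back to the per-mode win counts should reproduce the 139 total Beta 3 wins. This sketch assumes "Roguelike Mode" refers to the default difficulty:

```python
# Non-anonymous wins by difficulty mode (from the wins list above)
named_wins = {"Default": 101, "Easier": 4, "Easiest": 13}

# Anonymous wins excluded from that list; assumes "Roguelike Mode"
# is the default difficulty
anonymous_wins = {"Default": 15, "Easiest": 6}

all_wins = {
    mode: named_wins.get(mode, 0) + anonymous_wins.get(mode, 0)
    for mode in named_wins
}
print(all_wins)
print("Total wins:", sum(all_wins.values()))
```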

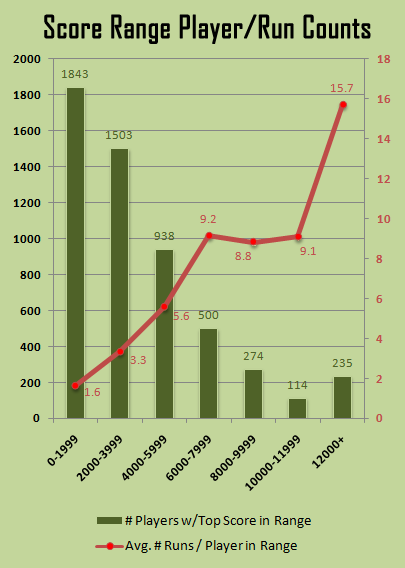

Looking at all players and score in general, the trend is what one would expect:

Players who tend to score low (which usually, but not always, means dying earlier) also played fewer games on average. Playing more games clearly leads to improvement in skill level, although it's interesting to note that flat area in the middle of the run count line, which probably reflects a combination of players who are just naturally better and also those who were playing in previous Betas/Alphas and already more skilled to begin with.

Difficulty Modes

Only a small number of players (27; 2%) ever changed their difficulty mode, in most cases (91%) to lower it. Only one player raised their difficulty two notches, twice a player raised it by one level, and 17 lowered it by one. 15 players dropped their difficulty from the default to Easiest.

This data only looks at non-anonymous records, so technically it covers only 22.7% of players, though these are also the players spending more time with the game, unlike the many anonymous players who generally do fewer runs anyway before stopping for now.
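The 91% figure appears to be counted per change rather than per player: the changes listed add up to 35, more than the 27 players, so some players presumably changed more than once. Under that assumption (and assuming the single two-notch jump was upward), 32 of the 35 changes were reductions. The 22.7% is the non-anonymous share of all unique players from the Overview:

```python
# Difficulty changes, counted per change rather than per player (assumption)
raised = {"two notches": 1, "one notch": 2}
lowered = {"one notch": 17, "default to Easiest": 15}

total_raised = sum(raised.values())
total_lowered = sum(lowered.values())
total_changes = total_raised + total_lowered
print(f"Lowered: {total_lowered}/{total_changes} ({total_lowered / total_changes:.0%})")

# Share of all unique players covered by the non-anonymous data
named_players = 1_287
anonymous_players = 4_389
coverage = named_players / (named_players + anonymous_players)
print(f"Non-anonymous share: {coverage:.1%}")
```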

Steam vs. Non-Steam

Cogmind can be purchased from two different stores, mine and Steam's, and score sheets indicate which build they were uploaded by (I have a separate build for each), so in all this data we can also look for any differences between the two sources.

During this Beta, 956 non-Steam players finished 4,029 runs, while 4,457 players on Steam finished 22,450 runs. Thus 82.3% of players are using Steam, and playing 84.8% of the runs. A fair number of Linux and Mac players use the DRM-free option (since it's not supported on Steam), as does anyone who just doesn't want to use Steam or prefers to buy direct from developers, together boosting the non-Steam numbers by a good bit.
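Both Steam percentages come straight from the player and run counts. Dividing runs by players for each group gives another view of the same gap:

```python
# Beta 3 players and finished runs by store build
players = {"Steam": 4_457, "non-Steam": 956}
runs = {"Steam": 22_450, "non-Steam": 4_029}

steam_player_share = players["Steam"] / sum(players.values())
steam_run_share = runs["Steam"] / sum(runs.values())
print(f"Steam players: {steam_player_share:.1%}")
print(f"Steam runs: {steam_run_share:.1%}")

# Average runs per player in each group
for store in players:
    print(f"{store}: {runs[store] / players[store]:.1f} runs per player")
```

The Steam run share being a little higher than the Steam player share simply means Steam players finished slightly more runs each on average (about 5.0 vs. 4.2).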

To my knowledge the majority of the experts switched over to the Steam release, so maybe that helps account for the fact that the average score among Steam players was 4,386 (median 3,016), vs. 3,975 (median 2,751) off Steam.

I'll be looking at more Steam vs. non-Steam data when we get to the preferences later.

Exploration

One of the main draws of Cogmind is exploring the expansive foreign world, so let's see how far into it players have managed to get so far.

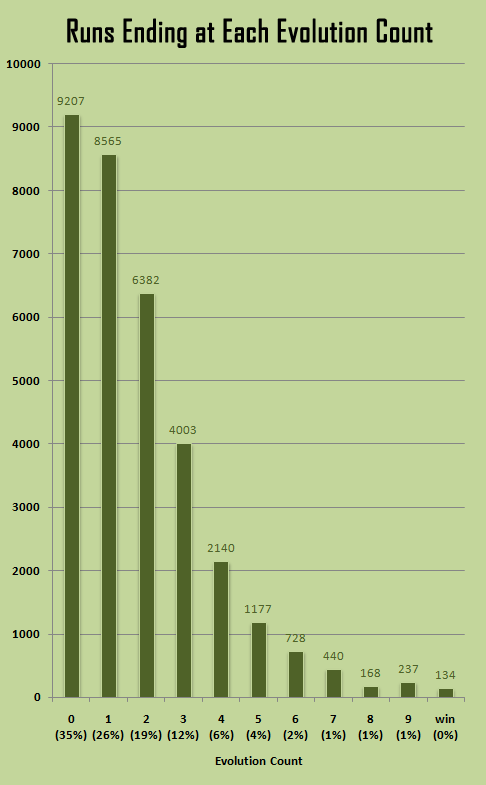

Tallied on a per-run basis we can see that a full third of runs end on the first map. That's where you're at your weakest, and especially when inexperienced a few wrong moves can potentially lead to a downward attrition spiral since core integrity is still not all that high and coverage is minimal (lowest slot count).

A still-high quarter of runs end on the floor after that (-9, following the first evolution). Core integrity and coverage are still somewhat low, but most importantly this is the first floor into which Cogmind may enter in a suboptimal state, e.g. partially or totally naked (coming into the first floor this isn't an issue) without much in the way of spare parts. That's the most disadvantageous situation to be in, and it's even more likely here because you may have even entered after passing through the unpredictable and potentially dangerous Mines. This and the initial appearance of Sentries makes the second floor just about as tough as the first for new players.

I'm pretty sure the percentage of runs ending on the first map will shrink with Beta 4 since both its size and difficulty were reduced to not be quite such a harsh early gateway for new players, and to streamline it even more for experienced players. The other two Materials floors also got some tweaks to make them a little easier to survive, so together with the opening floor changes we'll be seeing that whole early-game peak flattening out a bit (though just as much from the fact that we won't have quite such a surge of new players as we did during Beta 3).

Beyond the early-game it's an understandably rapid descending graph with fewer and fewer runs surviving as the difficulty ramps up over distance--difficulty increases rather quickly in Cogmind :)

The final depth at evolution 9 is naturally the most difficult so there's an uptick of deaths there. By contrast, anyone who can survive the first Research level at evolution 7 can probably also make it through 8. That said, the area is tough enough that many players enter the final map underpowered, accounting for some of the extra deaths there (some of the rest are opting to take on extended game content and don't pull off the win).

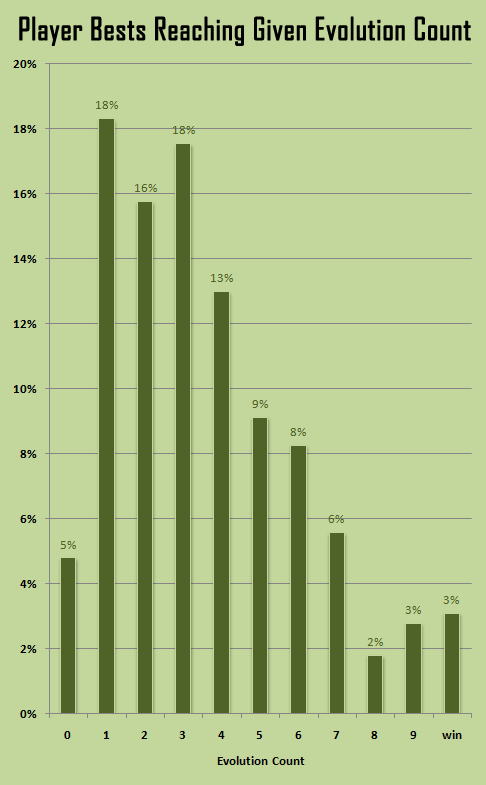

Overall, this related graph paints a less gruesome picture:

Taking only the best run from each player, we can see a more reasonable gradual slope, though the first half of the game is clearly still a barrier to the majority of players (70%).
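The difference between the two exploration graphs comes down to how runs are counted. Here's a minimal sketch of both tallies on made-up toy data, assuming "best run" means the run that got deepest; the record format and player names are hypothetical, not Cogmind's actual score sheet fields:

```python
from collections import Counter

# Hypothetical run records: (player, deepest map reached), where 1 is the
# first map and larger numbers are deeper. Toy data, not real Cogmind stats.
runs = [
    ("alice", 1), ("alice", 1), ("alice", 2), ("alice", 4),
    ("bob", 1), ("bob", 2),
    ("carol", 3),
]

# Per-run tally: every run counts, so repeated early deaths pile up at depth 1
per_run = Counter(depth for _, depth in runs)

# Best-run tally: keep only each player's deepest run
best_depth = {}
for player, depth in runs:
    best_depth[player] = max(depth, best_depth.get(player, 0))
per_player = Counter(best_depth.values())

print("per run:    ", dict(sorted(per_run.items())))
print("best/player:", dict(sorted(per_player.items())))
```

In the per-run tally the first map gets three entries, while in the best-run tally it gets none, since every player eventually made it further. That's the same flattening effect seen between the two graphs.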

This is yet more reason to continue adding content to keep players who are stuck there entertained :P. (Cogmind's content is mid/late-game heavy, greatly increasing with each new depth, so there's definitely room to expand the early game which is something I'll turn my attention to again later.) However, for really accurate data there we'd technically have to factor out a bunch of low-run players. Remember the run counts per player graph from earlier--a lot of the people at the low end have only actually played 2-3 runs on average, which is not usually enough to learn all the most important survival tactics. This graph's peak would naturally flatten out if those people played a few more runs, but many just bought and tested out the game for a bit, and will come back later.

The slight reversal of the trend in the late-game reflects the idea that many who can at least make it to that hardest segment of the world probably won't take too long to figure out how to push through to the end, especially with... help from branches :D

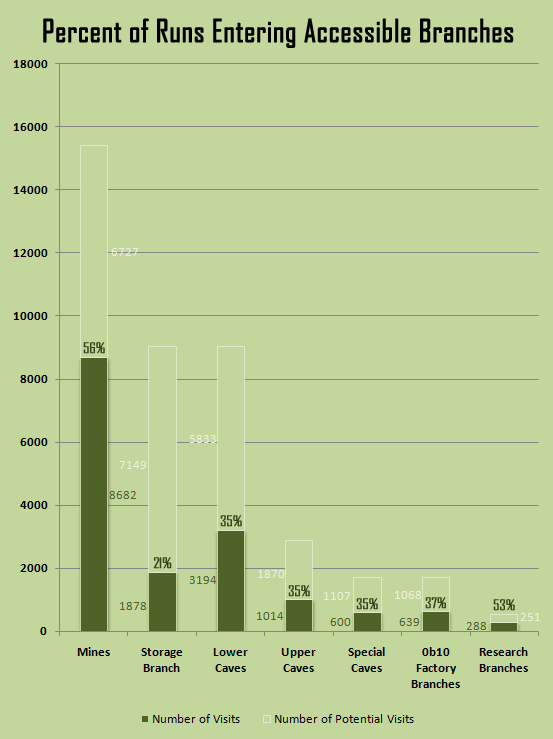

86% of wins visited special branch maps that, to put it generally, significantly increase Cogmind's chance of survival in the long run. Not that all branches are created equal--the easily accessible maps aren't quite as useful as what's found in deeper or more dangerous areas.

Pretty much all runs that make it out of the early game hit at least one branch or another, even if not looking for them.

The above graph shows the percentage of runs which actually visited any map in the given category of branches as a portion of the total number of runs to reach the depths at which those branches were available. For example more than half of players who could enter the Mines did so (many probably newer players entering somewhat unintentionally, although there are certainly players who enjoy taking Mines exits now that they're not so deadly and can come with more perks).
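The branch ratio above divides visits by *eligible* runs, i.e. runs that reached a depth where the branch could be entered, rather than by all runs. A minimal sketch of that calculation, where the `Run` record and the availability depths in `BRANCH_AVAILABLE_FROM` are hypothetical stand-ins (not Cogmind's real map layout):

```python
from dataclasses import dataclass, field

@dataclass
class Run:
    deepest: int                                # deepest main map reached (1 = first map)
    branches: set = field(default_factory=set)  # branch categories visited

# Hypothetical: first main map from which each branch category can be entered
BRANCH_AVAILABLE_FROM = {"Mines": 1, "Storage": 2, "Research": 5}

def branch_visit_ratio(runs, branch):
    """Share of runs that visited `branch`, among runs that got deep enough to enter it."""
    eligible = [r for r in runs if r.deepest >= BRANCH_AVAILABLE_FROM[branch]]
    if not eligible:
        return 0.0
    visited = sum(1 for r in eligible if branch in r.branches)
    return visited / len(eligible)

# Toy data
runs = [
    Run(1),
    Run(1, {"Mines"}),
    Run(3, {"Mines", "Storage"}),
    Run(6, {"Research"}),
]

for branch in BRANCH_AVAILABLE_FROM:
    print(f"{branch}: {branch_visit_ratio(runs, branch):.0%}")
```

Restricting the denominator this way is what keeps deep branches from looking artificially rare just because most runs never get close to them.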

The Storage branch ratio is especially low because it has fewer entrances than the average branch listed here. And the Research branches are especially high (53%!) because many of the players who can reach those late-game areas know how valuable (and fun) they are.

Speaking of optional maps, while browsing the stats it was interesting to note that 23 times players passed right through a special secret cave area and didn't realize it. Also only 2 players visited the world's most difficult area to reach.

A few plot-related stats, described in cryptic terms to avoid spoilers:

- Cogmind was imprinted in 3.7% of all runs; players in 84 runs decided not to imprint despite having the chance. 31.6% of winning runs were imprinted, with 8 winners (5.8%) choosing not to imprint. Get your imprinting in during Beta 4 runs, because it's going to be nerfed in Beta 5 to make it a more strategic decision with additional long-term implications (rather than a no-brainer).

- 12 runs interfaced with DC and went on to win, of 68 total runs choosing to do so.

- 18 winning Cogminds visited W, of 41 visits in all (43.9%).

- 24 wins were achieved with a reset core, out of 101 total resets (23.8%).
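Each of these is a part/whole ratio. This quick check uses the 139 Beta 3 wins as the denominator for the imprinting figure. The DC percentage isn't stated above, so it's derived here from the quoted counts:

```python
# (part, whole) pairs quoted in the list above
stats = {
    "winners who skipped imprinting": (8, 139),
    "DC interfaces that led to a win": (12, 68),
    "W visits by eventual winners": (18, 41),
    "core resets that led to a win": (24, 101),
}

for label, (part, whole) in stats.items():
    print(f"{label}: {part}/{whole} ({part / whole:.1%})")
```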

With all the fresh Cogminds, average lore collection across all reporting players took a dive to only 6%, as did the item gallery collection rate which now averages 14%. That said, congratulations to GJ, the first (and still only) player to discover every single item in the game, and also every piece of lore! That's a heck of a lot of stuff, but someone's finally done it--I'll just have to add more to keep him on his toes :P

Meta/Preferences

One of the areas I most enjoy comparing across releases is player preferences, and of course we can expect some significant changes with the addition of a Steam version accessible to a wider audience.

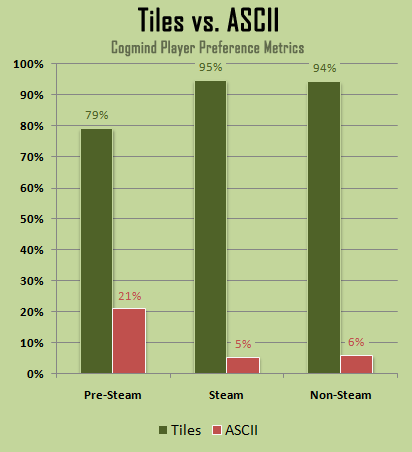

Probably one of the biggest questions of preference when it comes to traditional roguelikes is "Tiles or ASCII?" In many cases both are supported, and Cogmind was even designed for ASCII with an ASCII-like tileset only added two years into development. But as we know a lot of players have trouble getting into ASCII, so in the big picture tilesets will always win out. The question is by how much.

Prior to Steam 21% of players were using ASCII mode, a pretty good chunk but still a clear minority. With Steam that dropped from one in five to one in twenty players (5%). We'll see similar trends with many other preferences below.

Note that in these graphs, "pre-Steam" data uses Beta 2 records, which are largely representative of the community we've had going for the past couple years. For most graphs I've also separated out Steam vs. Non-Steam players in order to examine whether there are any notable differences there. In hindsight it seems non-Steam players show no major deviations from Steam players in terms of preferences, suggesting that the post-Steam trends are a result of simply having a wider audience, and not particular to the Steam community itself.

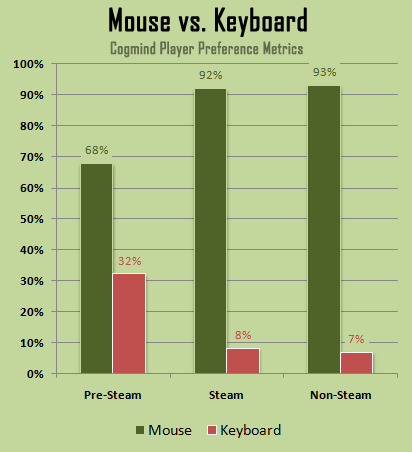

Not as many traditional roguelikes support the mouse as thoroughly as Cogmind does, but I'd argue that any roguelike wanting maximum exposure and enjoyment should include full mouse support in its design. Of course I don't really have to argue it, because everyone will probably agree anyway, and in any case there are the facts graphed right there :P

Throughout Alpha and early Beta, about a third of players didn't use their mouse at all, but now that's dropped to 7-8%.

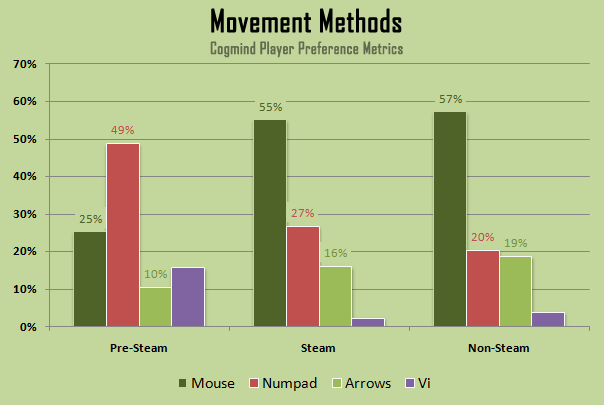

As for movement methods, mouse is also king, though notice that even following the Steam release more than a third of players still use the keyboard to move. In Cogmind there is often a need for tactical space-by-space movement, even outside of combat, and even some players who otherwise use the mouse to select targets, get info, or manage their inventory are still open to one of the keyboard-based movement methods.

Before Steam, a surprisingly low 25% of players used the mouse for movement. At the time, the players submitting scores were mostly more experienced (or very familiar with roguelikes) and likely wanted maximum precision without fear of misclicks.

Among the keyboard movement methods, as expected numpad is the most popular. Out of curiosity I always keep a close eye on vi key usage, and although pre-Steam vi usage generally hovered around 15-16% and is now below 5%, it's interesting to note that twice as high a percentage of non-Steam players use vi as those on Steam. (There are 136 of you--more than dozens! :P) I believe one of the biggest factors here is Linux players, who to my knowledge are almost exclusively using the DRM-free version, and the same crowd also tends to be more familiar with the vi layout.

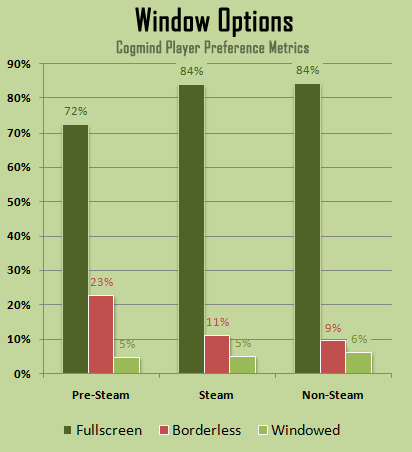

No huge differences to see in window settings, although based on these graphs I'd say a lot of newer players probably just don't realize there is a borderless window option yet :). We'll probably be seeing borderless fullscreen rising later on, unless there really are that many more people without multiple monitors among the wider gaming population compared to what we had pre-Steam.

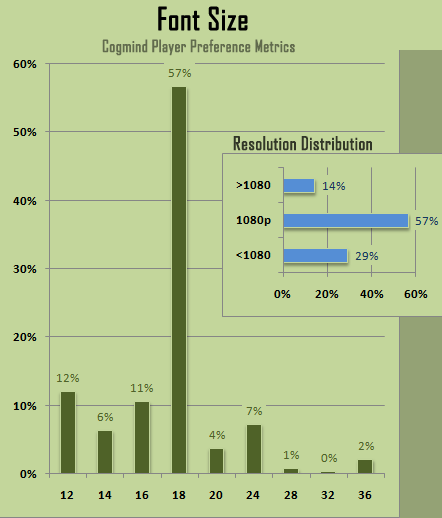

For font sizes I've only shown post-Steam values. As before, size 18 is by far the most common, generally equivalent to 1080p.

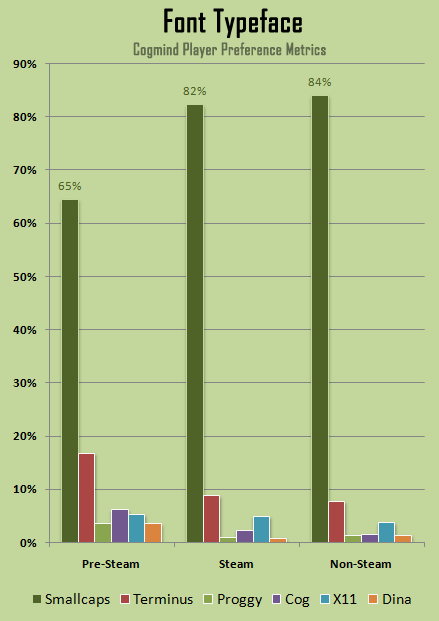

The vast majority of players are sticking with Cogmind's default typeface (Smallcaps), and as usual Terminus is the second most popular. Interestingly X11 took over as third most popular, attracting a lot more attention on Steam than any of the other remaining options. Also interesting is that Cog slipped pretty far from its pre-Steam third place spot, which we can almost certainly attribute to the fact that it was Cogmind's original default font back in early development, and a greater number of regular players had gotten used to it, but now it requires that players manually select it over the others.

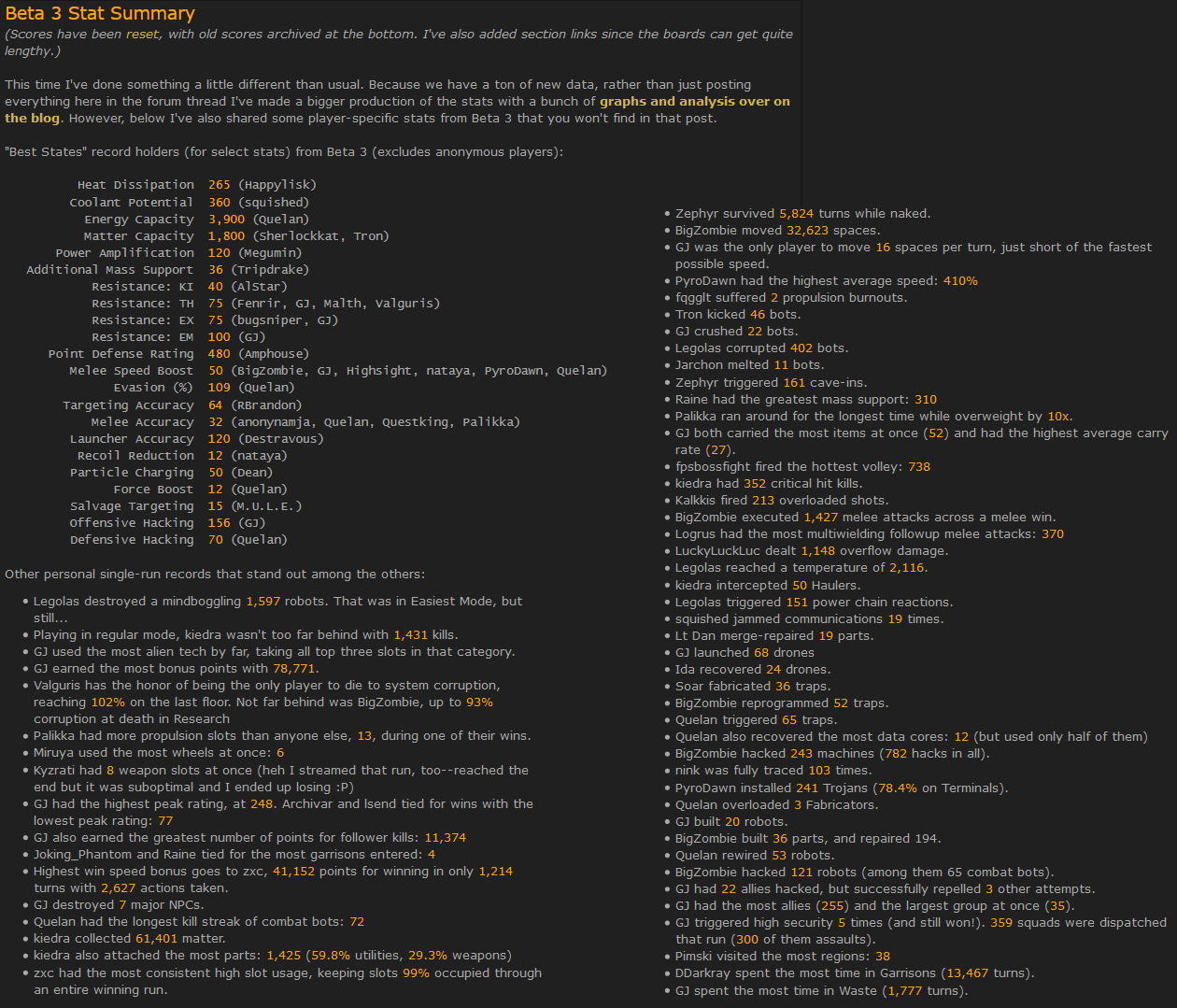

I also compiled a lot more player-specific stats over on the forums:

Note that some numbers in this post may not always be perfectly comparable across different data sets and analyses. I was using numerous different spreadsheets to work with the data from multiple sources, and sometimes excluded subcategories of data for various reasons. Even if not always fully accurate or comparable, the data does meaningfully reflect general trends.