Here's a Natural Selection 1 level rendered in our engine:

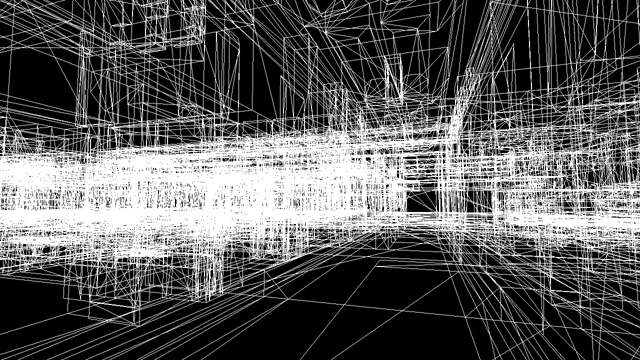

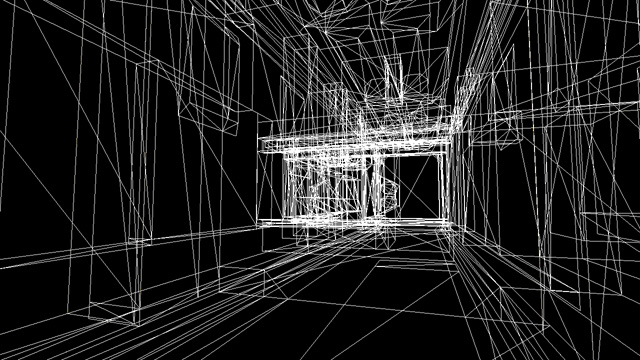

Even with the textures missing, some of you will probably recognize this spot. While this may look like a simple hallway, to the rendering engine it looks a lot more complicated. Here's the same scene rendered in wireframe mode:

As you can see, there's lots of stuff behind those walls that's being rendered, but which we don't actually see. In many first-person engines, this problem is solved by precomputing, for each region of the level, which other parts of the level could be visible from it; this is known as a potentially visible set (PVS).
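To make the PVS idea concrete, here is a minimal sketch of the run-time lookup. The cell names, object names, and the `objects_to_draw` helper are all made up for illustration; real engines such as Quake store this data in a compact per-leaf format rather than Python dictionaries.

```python
# Hypothetical precomputed PVS: for each cell of the level, the set of
# cells that could be visible from somewhere inside it. An offline tool
# would generate this table; here it is written by hand.
PVS = {
    "hallway": {"hallway", "lobby"},
    "lobby": {"hallway", "lobby", "reactor"},
    "reactor": {"lobby", "reactor"},
}

# Hypothetical level contents, grouped by the cell they sit in.
OBJECTS_BY_CELL = {
    "hallway": ["door", "light"],
    "lobby": ["desk"],
    "reactor": ["core", "pipes"],
}


def objects_to_draw(camera_cell):
    """Return the objects in every cell the PVS marks as potentially visible."""
    visible = []
    for cell in sorted(PVS[camera_cell]):  # sorted only to make the order stable
        visible.extend(OBJECTS_BY_CELL[cell])
    return visible


if __name__ == "__main__":
    # Standing in the hallway, the reactor room is never considered.
    print(objects_to_draw("hallway"))
```

The lookup itself is cheap; the cost is in building the table ahead of time, and the table is only valid as long as the level geometry doesn't change.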

If you've ever done any mapping for the Quake or Half-Life engines, you're probably familiar with the "vis" tool, which performs this precomputation. This can be a slow process that requires a lot of tweaking and careful modeling. Because the data is precomputed, the environment can't change much at run-time. And finally, the PVS method is not very good when it comes to outdoor scenes. To alleviate all of these problems, we've chosen to use a hardware-assisted occlusion culling method that doesn't require precomputation.

Here's what the scene looks like with the occlusion culling system enabled:

As expected, most of the invisible geometry has been discarded during the rendering process. Because of the way the algorithm utilizes the graphics card, the structure of the level doesn't really affect the system. It works with outdoor environments as well as indoor environments and can handle polygon "soups" -- collections of polygons that don't really have any structure at all.
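For a rough feel of what a hardware occlusion test does, here is a small CPU-only simulation. It is a sketch under heavy assumptions, not our engine code and not a GPU API: the "screen" is a single row of 16 pixels, each object is a flat span at one depth, and `visible_samples` stands in for the sample count a graphics card would report for an occlusion query. A real system would test a bounding volume rather than the object itself.

```python
import math

WIDTH = 16  # a tiny one-row "screen", purely for illustration


def visible_samples(depth, x0, x1, z):
    """Mimic an occlusion query: count pixels in [x0, x1) that pass the depth test."""
    return sum(1 for x in range(x0, x1) if z < depth[x])


def draw(depth, x0, x1, z):
    """Write the span into the depth buffer wherever it is the nearest surface."""
    for x in range(x0, x1):
        if z < depth[x]:
            depth[x] = z


def render(objects):
    """objects: list of (name, x0, x1, z). Returns the names actually drawn."""
    depth = [math.inf] * WIDTH
    drawn = []
    # Front-to-back order, so nearer geometry gets a chance to hide farther geometry.
    for name, x0, x1, z in sorted(objects, key=lambda o: o[3]):
        if visible_samples(depth, x0, x1, z) == 0:
            continue  # fully hidden behind what has already been drawn
        draw(depth, x0, x1, z)
        drawn.append(name)
    return drawn


if __name__ == "__main__":
    scene = [
        ("wall", 0, 16, 1.0),   # covers the whole screen
        ("crate", 4, 8, 5.0),   # sits behind the wall
        ("pillar", 2, 4, 0.5),  # stands in front of the wall
    ]
    print(render(scene))
```

Notice that nothing here depends on rooms, portals, or any other level structure: the test only asks whether any pixel of an object would end up in front of what is already on screen.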

While real-time occlusion culling has some additional performance cost over precomputed systems like PVS, we think the benefits more than make up for it. And with clever optimizations, that cost can be reduced substantially.

For those who are technically minded and would like to learn more about occlusion culling, our system is an implementation of the CHC++ algorithm (paper available online here).

Good stuff. Always good to know that good optimizations are being implemented. Maybe my computer will run this at a decent frame rate. =O

indeed!

oops omg sorry Spector i accidentally [-] your post instead of [+] please forgive me....

Nice, it is always a good thing to know info like this... go NS2...

Very Nice! I always say great occlusion culling makes great levels. Great to see you guys go as far as making your own tools. Looking forward to NS2.

looking great, as was expected from you guys! :D What you guys have done with the occlusion culling sounds fantastic already. Really can't wait to see this in action. You should release a video of running through the level in wireframe! :)

Looking forward to more news!

Haven't you been making Natural Selection 2 forever? Why do we get this very basic info, isn't there anything more interesting to see, yet?

I really wonder what's gonna come out of NS2, as the first part was a great game, but time doesn't go easy on old concepts. Better hurry up a little, or you will be dead on release.

Using the Occlusion Test Extension (don't know what this is called in DirectX), it looks like. The performance hit comes from the feedback nature of this system (reading back info from the graphics card stalls the render pipeline). I'm a bit astonished this alone should yield a speedup, since many tests so far have shown that relying on HW occlusion testing alone won't gain anything. Any profiling numbers you can produce? Just curious to know, since my numbers support the stance that HW occlusion is slower.

great after months you came with occlusion culling???

isn't news supposed to be about the game?

hey genius. it's about the game engine. you need a game engine to make a game.

...have you made the connection yet?

umm lol of course you need an engine but adding occlusion culling to your engine and thinking it is a ground breaking feature is priceless. nowadays it is like "hey look I added 3d rendering"

anyway sorry for not being a natural selection fan.

Yeh it sure is good with optimization, but this isn't really anything revolutionary? :S I mean, most mappers know this stuff xD

probably they have nothing else good to show

sadly...

Mappers know it's there. They don't know how it works.

ah right, and just so you know, progress IS getting done, they're just being very careful about releasing media, particularly when it comes to art direction or game design decisions. updates on the technical side just happen to be the 'safest'.

how you know there is progress: follow their twitter for most recent updates - Twitter.com - the team updates it very often.

and there's a discussion thread for the twitter updates here Unknownworlds.com so register if you wanna chime in.

I think Harimau summed it up best. I know that to many people, occlusion culling isn't the most exciting news. However, the team is keeping a tight lid on their juiciest info. This is on purpose, not for a lack of interesting news. StarCraft 2 is about to go beta, and there are things people didn't know for a while, and other things are still changing every day. Does that mean SC2 was boring and/or early in development? No, it simply means that the developers wished to keep a tight lid on what is sure to be a killer game. This goes for NS2 as well.

Just like everyone here, thanks. I love to see really good explanations such as yours on how the game is made. I'd love to see this kind of thing from most developers.