[w]tech - Voxel based terrain layers via Deferred Rendering

In our last news post we introduced our new voxel-based terrain. It now also supports multiple material layers. Unlike many other engines that build their terrain from voxels, ours offers transparent layers and, in theory, several shaders per layer. Layers can even be painted on with a brush, just as with ordinary heightmap terrains. That means complete freedom in terrain building!

First of all, here is a video of the whole thing:

( Youtube.com <- HD + Fullscreen)

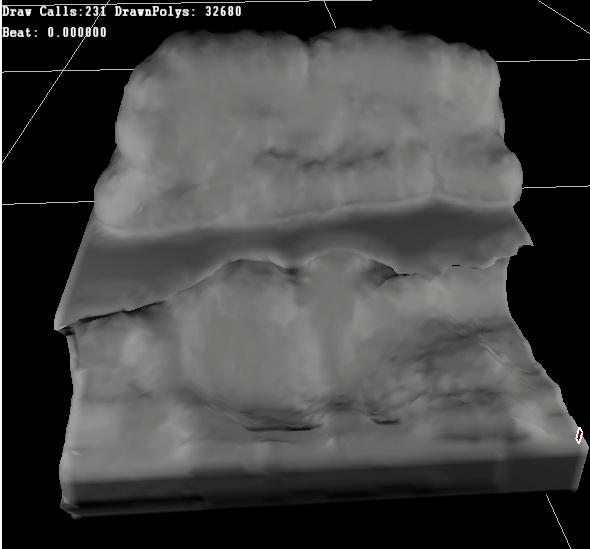

Now that you have seen what is possible, some of you will ask: “How does it work?” So I will briefly explain how we did it. Here is a picture of a plain white terrain without any layers:

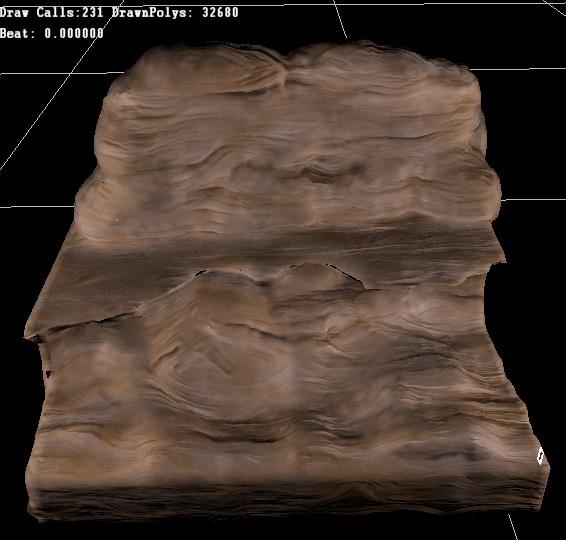

Once the canyon has been given a suitable shader material, it looks like this:

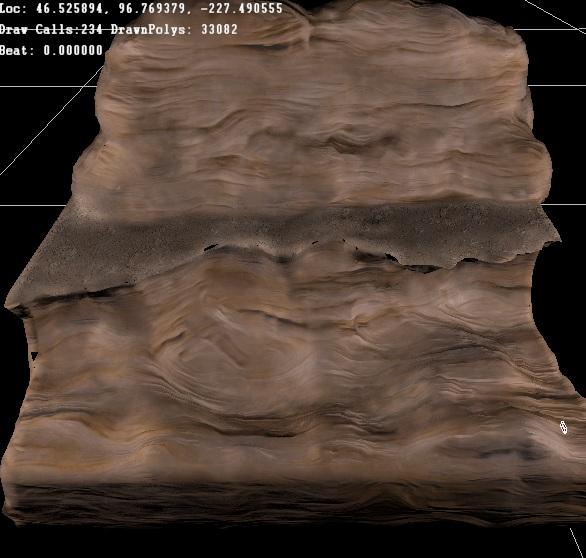

At this point only the base layer is in use; the terrain has simply been assigned a shader. Its textures are projected triplanarly onto the surface, so no UV maps are needed (explained in more detail below). It already looks quite nice, but the cliff that one is supposed to walk along needs a visible path. This is where the brush paint function comes into play. Since the first layer is completely visible, storing its information is fairly unspectacular. But what lies behind it?

You can see a path of sand. Every layer has its own voxel volume (I explained what that is in the last news post). So we have one volume for the geometry and one for our second layer. Whenever the mesh is regenerated from the voxels, we can attach some data to every vertex.

For the terrain, at least two slots have to be filled: a three-dimensional vector that gives the position of the vertex, and another 3D vector for its normal.

We don't need texture coordinates or tangents here, but the slots still exist: a 2D vector for the UVs and a 3D vector for the tangent. We can store data in each of these free components.

UV.x=Layer1

UV.y=Layer2

Tangent.x=Layer3

Tangent.y=Layer4

Tangent.z=Layer5

That adds up to a maximum of 5 layers (which can be extended later). And how do we know which vertex gets which layer data? Quite simply: we take the position of the vertex, scale it to the resolution of the layer volumes and go through all of them. That way we can directly read the value stored at the vertex's position and put it into the corresponding slot.
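A minimal sketch of this lookup in Python, not the engine's actual code. The names `Vertex`, `sample_volume` and `pack_layers`, the nested-list volume layout, the `voxels_per_unit` scale and the nearest-voxel lookup are all assumptions made for illustration:

```python
from dataclasses import dataclass


@dataclass
class Vertex:
    position: tuple                      # (x, y, z) in world space
    normal: tuple                        # (x, y, z) surface normal
    uv: tuple = (0.0, 0.0)               # unused by the terrain: Layer1, Layer2
    tangent: tuple = (0.0, 0.0, 0.0)     # unused by the terrain: Layer3..Layer5


def sample_volume(volume, position, voxels_per_unit=1.0):
    """Nearest-voxel lookup in a volume stored as volume[x][y][z] (assumed layout)."""
    sizes = (len(volume), len(volume[0]), len(volume[0][0]))
    index = []
    for coord, size in zip(position, sizes):
        i = int(round(coord * voxels_per_unit))   # scale world position to voxel grid
        index.append(min(max(i, 0), size - 1))    # clamp to the volume bounds
    x, y, z = index
    return volume[x][y][z]


def pack_layers(vertex, layer_volumes, voxels_per_unit=1.0):
    """Store up to five layer values in the free UV and tangent components."""
    if len(layer_volumes) > 5:
        raise ValueError("only 5 free components are available")
    weights = [sample_volume(v, vertex.position, voxels_per_unit)
               for v in layer_volumes]
    weights += [0.0] * (5 - len(weights))         # unused layers stay at zero
    vertex.uv = (weights[0], weights[1])
    vertex.tangent = (weights[2], weights[3], weights[4])
    return vertex
```

The shader then reads the layer values back from the UV and tangent inputs instead of interpreting them as texture coordinates or tangents.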

Now the layers are in our mesh and can be accessed in a shader. But we don't want to draw rocks and the like right there, because what we need is the ability to use several shaders rather than packing everything into one.

One option would be to render the terrain once for every shader and blend the results together. This technique is called Forward Rendering. The problem with it: every layer means about 200 additional draw calls and roughly 30,000 additional polygons (depending mainly on the size of the terrain). Rather suboptimal.
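To put rough numbers on that, here is a small back-of-the-envelope sketch in Python. The per-pass figures are the approximate ones quoted above; the deferred estimate assumes one terrain geometry pass plus one full-screen quad (2 triangles, 1 draw call) per layer, which is an assumption for illustration and not a measured value:

```python
DRAW_CALLS_PER_TERRAIN_PASS = 200      # approximate figure from the text
POLYGONS_PER_TERRAIN_PASS = 30_000     # approximate figure from the text


def forward_cost(layers):
    """Terrain is rendered once per layer: (draw calls, polygons)."""
    return (layers * DRAW_CALLS_PER_TERRAIN_PASS,
            layers * POLYGONS_PER_TERRAIN_PASS)


def deferred_cost(layers):
    """One terrain pass plus one full-screen quad per layer (assumed)."""
    return (DRAW_CALLS_PER_TERRAIN_PASS + layers,
            POLYGONS_PER_TERRAIN_PASS + 2 * layers)
```

Under these assumptions, five layers cost about 1,000 draw calls and 150,000 polygons when the terrain is re-rendered per layer, compared with roughly 205 draw calls and 30,010 polygons for a single geometry pass followed by screen-space passes.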

What we do instead is write the depth, the normals and the layer data into render targets (textures); see our Deferred Rendering news post about lighting. Why this matters will become clear in a moment.

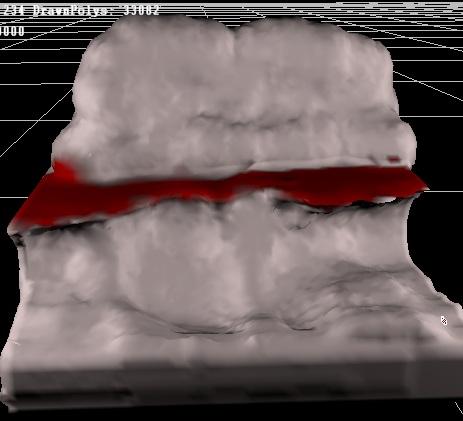

In the layers it looks like this:

The red line is our path; just ignore the lighting in this picture. The next step is the famous full-screen quad: we simply render a rectangle across the whole screen with our layer shader on it.

Now we come to the point where the depth and the normals become important. Since there are no UVs, we have to fall back on so-called triplanar texturing. It projects a texture onto the mesh from three sides and picks the most suitable side for the current pixel. For that we need the normals of the terrain as well as the depth, from which we can calculate the world-space position of the surface at each pixel. Since we also have the layer data of the terrain, the full-screen shader can make it look as if we had rendered directly onto the terrain.
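The idea behind triplanar texturing can be sketched in a few lines of Python. This is a minimal illustration, not the engine's shader: the function names, the `sample(u, v)` callback standing in for a texture lookup, and the `scale` and `sharpness` parameters are assumptions. It uses the common variant that blends the three projections by the normal; with a high `sharpness` the result approaches simply picking the most suitable side:

```python
def triplanar_weights(normal, sharpness=4.0):
    """Blend weights for the X, Y and Z projections, derived from the normal."""
    ax, ay, az = (abs(c) ** sharpness for c in normal)
    total = ax + ay + az
    if total == 0.0:
        raise ValueError("normal must not be the zero vector")
    return ax / total, ay / total, az / total


def triplanar_sample(sample, world_pos, normal, scale=1.0, sharpness=4.0):
    """Project a texture along the three world axes and blend the results.

    `sample(u, v)` is a hypothetical texture lookup returning a colour tuple.
    """
    x, y, z = (c * scale for c in world_pos)
    wx, wy, wz = triplanar_weights(normal, sharpness)
    along_x = sample(y, z)   # projection seen from the X axis
    along_y = sample(x, z)   # projection seen from the Y axis (top/bottom)
    along_z = sample(x, y)   # projection seen from the Z axis
    return tuple(wx * a + wy * b + wz * c
                 for a, b, c in zip(along_x, along_y, along_z))
```

Only the world-space position and the normal are needed as inputs, which is why both can come from the render targets rather than from the mesh itself.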

We also want lighting on our terrain. So depth, normals and the other required data are blended onto the render targets that we use for lighting in the regular deferred rendering.

Rocks, stones, grass or whatever else is calculated in the shader is likewise blended onto the regular render target.
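As a rough illustration of that blending step, here is a per-pixel sketch in Python. It assumes simple linear interpolation with the layer value as the blend factor; the function names and the choice of blend mode are assumptions and may differ from what the engine actually does:

```python
def blend_layer(target, layer_color, weight):
    """Linearly blend one layer's colour over the current render target pixel."""
    w = min(max(weight, 0.0), 1.0)    # keep the layer value in [0, 1]
    return tuple(t * (1.0 - w) + c * w for t, c in zip(target, layer_color))


def composite(base_color, layers):
    """Apply layers in order; `layers` is a list of (colour, weight) pairs."""
    result = base_color
    for color, weight in layers:
        result = blend_layer(result, color, weight)
    return result
```

A weight of 0 leaves the pixel untouched and a weight of 1 replaces it completely, so a painted sand path fades smoothly into the rock around it wherever the brush left intermediate values.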

Now that everything is back on "familiar" ground, we can proceed with rendering the scene as usual.

Briefly, on another topic: we would like to introduce and warmly welcome our new team member Nizza, aka Michael. He will take on two tasks. On the one hand, he will be our project manager and make sure that we concentrate on our core business. On the other hand, he will be our link to the outside world and handle news and applicants. On behalf of the [w]tech team: welcome aboard, we look forward to working with you.

I hope those who have made it to the end of this news post have understood everything and can perhaps even benefit from it.

With that, until the next news post!
