Z-buffering has been used in real-time 3D computer graphics for decades and has proved itself a viable way to solve the visibility problem at the level of individual pixels. Since the introduction of hardware 3D acceleration, the mainstream gaming industry has adopted this method for Z-culling, which can't be done consistently by simply sorting polygons by their distance (the so-called painter's algorithm). Storing a depth value for each screen pixel also enables many useful post-effects that modern engines benefit from, such as screen-space reflections and ambient occlusion. However, since only one depth value is stored per pixel, the Z-buffer works properly only for opaque geometry, and that turns out to be a major downside as non-opaque surfaces become increasingly common in games.

To reduce the overdraw of opaque objects, one should normally sort the polygons from nearest to furthest. The idea is that pixels behind those already drawn are discarded by the Z-test, so the earlier we plot the closest pixels, the more hidden ones we discard later. However, since non-opaque polygons can be seen through, they must be drawn in far-to-near order after all the opaque geometry is done to minimize unwanted artifacts. And when non-opaque (translucent or alpha-channeled) objects overlap or intersect, you're likely to get multiple depth conflicts within the same pixel, and that's where conventional Z-buffering breaks down. Imagine a long alpha-channeled projectile flying through an alpha-channeled obstacle such that only a part of it is closer to the viewer. There are workarounds such as depth peeling, which requires two Z-buffers and takes multiple passes to render everything behind transparent polygons separately: the more layers of transparency you have, the more passes it takes to get the correct look. Needless to say, this can turn out very inefficient for sufficiently complex scenes.

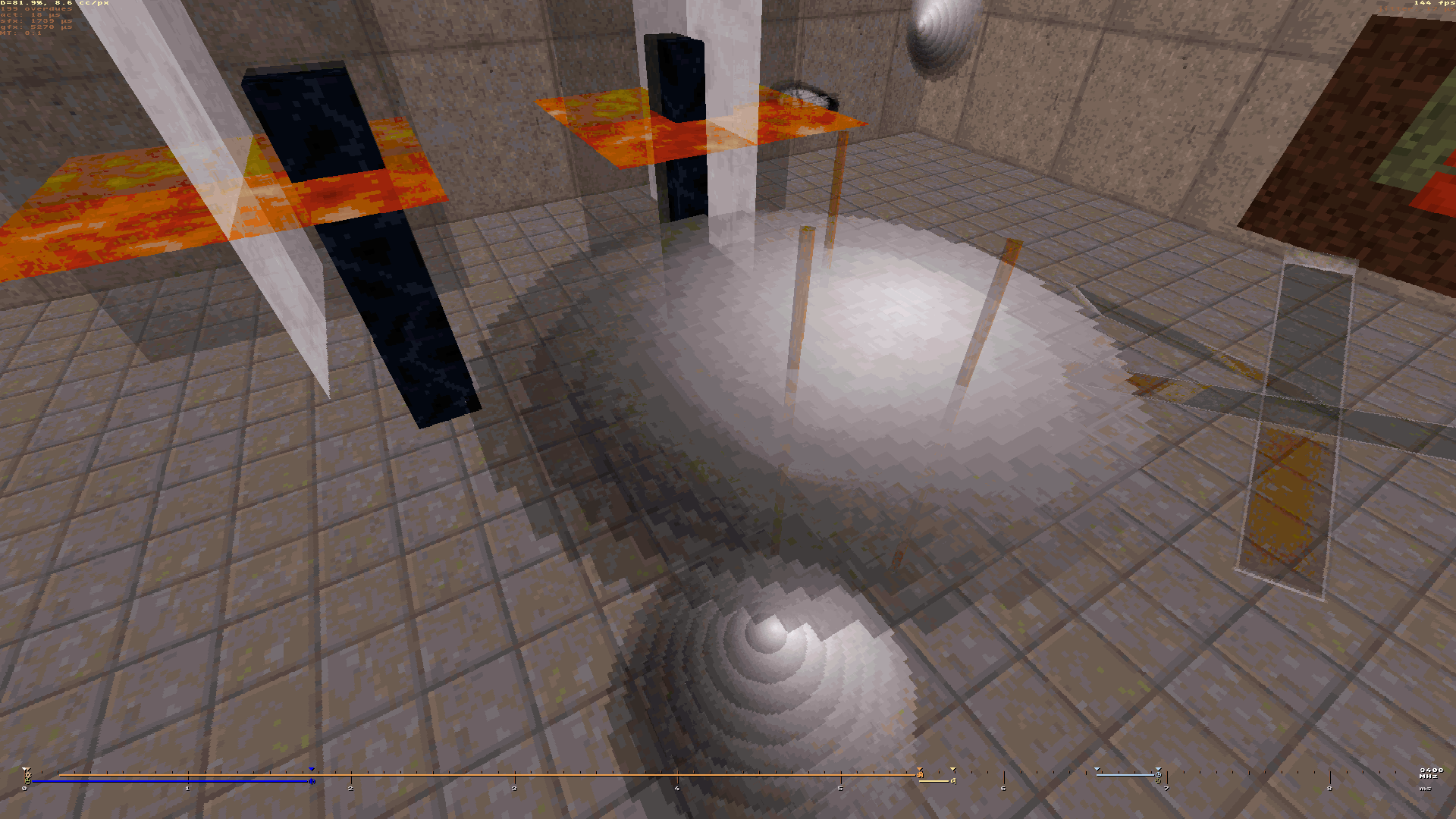
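
To make the draw-order rule above concrete, here is a minimal single-pixel sketch in Python. It is only an illustration under my own assumptions: the `Fragment` type, the `shade_pixel` function, and the one-channel color are hypothetical and not taken from any engine. Opaque fragments go first, near to far, with depth test and depth write; translucent ones follow, far to near, with the depth test only.

```python
from dataclasses import dataclass


@dataclass
class Fragment:
    depth: float  # distance from the viewer; smaller means closer
    color: float  # single-channel intensity in [0, 1] (simplification)
    alpha: float  # 1.0 means fully opaque


def shade_pixel(fragments, background=0.0, sort_translucent=True):
    """Resolve one pixel using a Z-buffer that stores a single depth value."""
    color, zbuf = background, float("inf")
    opaque = [f for f in fragments if f.alpha >= 1.0]
    translucent = [f for f in fragments if f.alpha < 1.0]

    # Opaque pass: near to far, depth test and depth write enabled.
    for f in sorted(opaque, key=lambda f: f.depth):
        if f.depth < zbuf:
            color, zbuf = f.color, f.depth

    # Translucent pass: far to near, depth test only (no depth write).
    if sort_translucent:
        translucent = sorted(translucent, key=lambda f: f.depth, reverse=True)
    for f in translucent:
        if f.depth < zbuf:  # fragments hidden behind opaque geometry are dropped
            color = f.alpha * f.color + (1.0 - f.alpha) * color
    return color


# Hypothetical scene: an opaque wall and three translucent layers.
scene = [
    Fragment(depth=10.0, color=0.2, alpha=1.0),  # opaque wall
    Fragment(depth=2.0, color=0.0, alpha=0.5),   # nearest translucent layer
    Fragment(depth=5.0, color=1.0, alpha=0.5),   # translucent layer behind it
    Fragment(depth=12.0, color=1.0, alpha=0.5),  # hidden behind the wall
]
sorted_result = shade_pixel(scene)
unsorted_result = shade_pixel(scene, sort_translucent=False)
```

With the translucent layers sorted far to near the pixel ends up at about 0.3, while blending them in submission order gives about 0.55. The Z-test cannot tell the two apart, and sorting whole objects does not help once they intersect, because no single order is then correct for every pixel.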

Not being able to maintain the correct order of transparency is a fundamental deficiency of Z-buffering, and it is responsible for some ugly artifacts we encounter in lots of games. Z-fighting is another nuisance that keeps haunting game developers. When working on the prototype renderer for my Brahma engine, I was confident about drawing everything along lines of constant depth, which seemed to be the optimal strategy for a software implementation with a slew of depth-dependent things such as fog and mipmapping. At some point I thought that if we augment this approach by processing all sprites and masks in the view at the same time, it would naturally give us the ability to draw everything in the correct order without any Z tests, and we would only need to sort the objects by their furthest point from the image plane.
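
The idea can be illustrated with a rough Python sketch. This is not the Brahma renderer's code, just a toy model under my own assumptions: each object is reduced to a hypothetical `Extent` (its nearest and furthest depth), depths are integers, and "drawing" a slice simply records a `(depth, name)` pair. Objects are sorted once by their furthest point, and a far-to-near sweep keeps a variable-size list of the objects currently being rasterized.

```python
from dataclasses import dataclass


@dataclass
class Extent:
    name: str
    near: int  # closest depth the object reaches
    far: int   # furthest depth the object reaches


def sweep_far_to_near(objects):
    """Return (depth, name) slices in back-to-front order, with no Z test."""
    order = []
    if not objects:
        return order
    # The only sort needed: by furthest point from the image plane.
    pending = sorted(objects, key=lambda o: o.far, reverse=True)
    nearest = min(o.near for o in objects)
    active, i = [], 0
    depth = pending[0].far
    while depth >= nearest:
        # Activate every object whose far edge has been reached.
        while i < len(pending) and pending[i].far >= depth:
            active.append(pending[i])
            i += 1
        # Retire objects that lie entirely behind the current depth.
        active = [o for o in active if o.near <= depth]
        # "Draw" one constant-depth slice of each active object.
        for o in active:
            order.append((depth, o.name))
        depth -= 1
    return order


# The projectile from the earlier example pierces the obstacle.
slices = sweep_far_to_near([
    Extent("obstacle", near=4, far=6),
    Extent("projectile", near=2, far=8),
])
```

In this toy scene the projectile's slices at depths 8 and 7 come out before any slice of the obstacle, and its slices at depths 3 and 2 come out after the obstacle is finished, so the part behind the obstacle is blended under it and the part in front is blended over it.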

Of course, this approach requires a more elaborate rendering pipeline that can keep track of a variable-size set of objects being rasterized concurrently. But since I already had some facility for multitexturing, extending it to support multiple objects was a reasonable and logical step. I have yet to make masks and voxel sprites render in the same fashion, but you can watch this video to get an idea of how it works in a real engine, and compare my results with the same map running in EDuke32.