Hey all!

This week we will talk about something very cool we've been doing. PLAYTESTING!

This is by no means a comprehensive guide on how to do Playtesting, and it's all very much focused on Okhlos, but I think we ran into some interesting bits that might help with other projects, so we wanted to share them.

Let's begin!

What is Playtesting?

When we started asking people to take part in the Playtesting, we found that most of them didn't know what "they had to do", or even whether they were qualified to do it. A lot of people believe that Playtesting and QA Testing (or Functional Testing) are the same thing. And, of course, they are not.

Playtesting is a kind of usability test, where you can see how players read and react to the game in an (ideally) relaxed context. The idea is to see the player interacting with the game the way she/he normally would. But with us breathing down their necks and taking silly, incomprehensible notes.

This is particularly useful for, say, polishing a tutorial. We always try to get people who have never played the game, so that we can see whether the tutorial is really effective at communicating the mechanics, or whether we have to be clearer in some areas. It's also really useful for setting up a good difficulty curve, and for checking how well the game gives feedback to players.

We've shown the game at lots of events. Seeing new people play the game is always useful, but at an expo, players don't usually pay much attention to it; they just want to try it. While playtesting at expos can point out some design flaws, you really need to sit down and analyze what's going on, and what would happen if people played the game at home instead of in a loud and flashy (and sometimes smelly) environment.

Why we do it

We did very small amounts of playtesting during the development of Okhlos, mostly at events. Sometimes we'd invite someone to try it, or send a build to a friend and ask for notes. We had never done a big playtesting session before.

We were getting close to the launch (and we still are!) and we were having some discussions (friendly, of course) with Devolver regarding the difficulty of the game.

We had been playing the game for so long that it was almost impossible for us to judge its difficulty by ourselves. To us, it always felt too easy. Devolver pointed out that the difficulty curve in the first levels was too steep, and it seemed that a large group of players were having trouble with the game.

Sending a game and asking for feedback is not very useful, because games are hard to put into words. It's when you watch the playthrough that you realize someone isn't getting the idea, or even that you might be asking the wrong questions. Pay attention to the play session itself; what you discuss with players afterwards is just for confirmation, and you are the one who has to identify the problems and the solutions. If someone says "I don't like this, maybe you should..." you can stop listening right there. You know your game better, and your playtesters should only communicate the problems they had, not the solutions. (This also depends on your group; we intentionally had very few designers in this round of playtesting.)

We decided to do a Playtesting session in order to see what was going on regarding difficulty. Any additional information we could gather (tutorial, mechanics and feedback) would not be the focus, but a very welcome bonus.

How we did the setup

We started by reaching out on Facebook to anyone interested in trying out the game (through our personal Facebook accounts, not the studio page, just to be sure that we at least knew the people coming to our offices).

Because of this misconception regarding playtesting and functional testing, we had to stress a few times that no knowledge was needed in order to take part in the Playtesting sessions. We looked for acquaintances that had never tried the game before and could drop by our offices to play a little.

The playtesting sessions got out of hand pretty quickly. Too many people wanted to try the game and give us feedback, so we had to narrow the list down to twenty-something people.

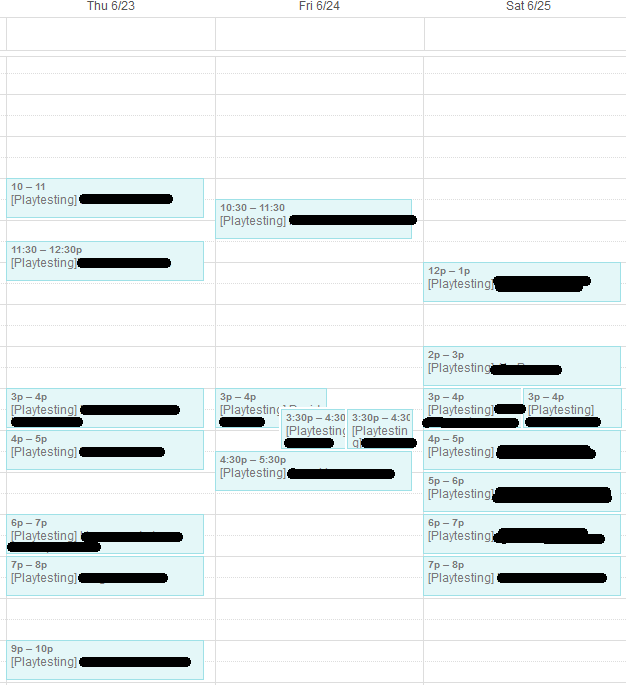

The sessions took literally all week, and because of their jobs, lots of people wanted to come on the weekend.

A cool thing we did from the start was spacing the sessions well apart. At first, we didn't know how long each session would take, so we assumed a two-hour slot for each participant. We thought each person would play for around an hour, so rounding up to two hours would give us plenty of time to get everything ready for the next playtester.

As you will find out later, we were clearly wrong.

Another cool thing we did was add each playtester to our calendar. This helped us a lot when we had to rearrange the timetable. We have a Gmail account for the studio that we use only for this kind of thing; we shared its calendar with our personal calendars and started adding events. Pretty handy and stupidly quick.

The only problem with this was that, for some random reason, the timezone configuration was broken, so all our appointments were off by an hour when viewed from our personal calendars, and that created some awkward overlaps.

The sessions

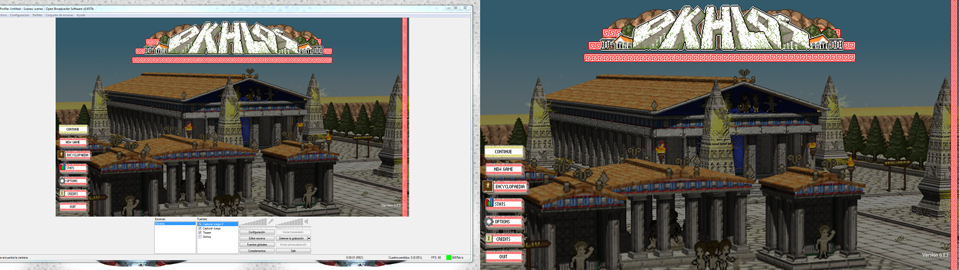

For starters, we set up OBS to capture the player's footage. Players would sit in front of the computer, and we would ask them how they usually played games at home, whether they used a joystick or a keyboard and mouse, and we would duplicate the screen so we could watch the session from another monitor. We recorded the videos at a very low resolution, just enough to see what was going on. There is no need to have 20 GB videos of hours of playtesting.

We also asked what language they usually play games in, and whether they used headphones. We didn't want to impose anything; we wanted players to feel at home. We even threw in some snacks to improve the experience. If you have to play an incomplete and broken game, at least you get some free food.

A little side note: we were surprised by the number of people in Argentina who play games in English. Probably half of the players wanted the game in English. This might be due to past experiences with shitty translations, and wanting the real deal, the true experience of the game. For me at least, even though Okhlos supports something like 8 languages, and this blog entry is in English, the game's main language is Spanish. There are some jokes that simply wouldn't work in any other language, and we literally did whatever we wanted in the Spanish version. Still, 99% of the game is the same in all languages, and the game should detect your system language.

We had planned each session to last about an hour. In reality, most sessions took about two hours, and sometimes more. This is very positive from a game design standpoint: people just wanted to keep playing. But from a scheduling point of view, it was a problem. We tried never to say "Well, that's enough, go home", but we had to a few times.

Because of the previously mentioned calendar bug, and the organic, always-changing nature of people, time slots started to overlap. When this happened, we had to resort to setting up another computer with the game running, where we couldn't duplicate the screen.

Our friend (and often spellchecker) @pfque_ doing playtesting. Photo by @alejandropro


While the playtesters were playing, we tried not to talk to them, to avoid distracting them. We also told them not to ask us questions. If there was something they couldn't figure out, we would jump in only if it was absolutely necessary.

At the end of each session, we would ask the player how they felt about the game: whether it was too hard or too easy, what they loved and what they hated, and whether there was something they hadn't understood. Most of these questions were pretty redundant, because when you know your game you can get most of this by simply watching, but some very interesting stuff came up in these five-minute interviews.

In the middle of the week, we did some A/B testing with basic stuff (I'll go into detail about that later), and it was really useful. Since we knew there would be no further playtesting after this, if there was something controversial we wanted to test, we would discuss it at the beginning of each day and make a new version.

Saturday was chaos, with probably six or seven people in our offices at any given time. The atmosphere was really relaxed and cool, but we couldn't pay as much attention to each individual session as we did during the rest of the week.

The videos were incredibly useful. When the week was over, we started discussing what we saw and what we should change, and the videos were a very quick reference for whatever we were discussing. My memory is pretty visual, so seeing the footage again helps trigger what I was thinking when I saw the session live.

If I had to draw a conclusion, it's that playtesting is really important and incredibly useful, and even if you don't want to burn out your friends by asking too often, you should do it at least two or three times per project (obviously, this depends on the scope of the project).

It's also a huge morale boost. We were very tired from the long, uninterrupted development cycle, and a little worried about Okhlos being too easy/hard/bad, but when we finished the sessions, the results were so good that they really gave us the energy to keep working as hard as before. From a psychological point of view, it seems very healthy for medium-size projects, as long as you make a conscious effort not to go trigger-happy with the changes.

That was pretty much our playtesting week, which was incredibly tiring, but amazingly fruitful. If you came here to read only about Playtesting, good job! You are done here. Below, I will talk about Okhlos's specific results.

Okhlos Results

The response was overwhelmingly positive. Most of the people who came to the playtesting weren't even acquaintances of ours; they had found out about it through someone who shared our original post (which was not exactly what we planned, but we enjoyed the enthusiasm).

This made the testers brutally honest, and yet nobody said they didn't enjoy the game. We do suspect that one of the testers really didn't like it: he played a little less than an hour and didn't want to keep playing. But statistically, it's still a pretty cool outcome.

As we said, we thought the game might be too hard. Through balancing, tweaking the difficulty curve and other changes, we think we found a sweet spot. Some players still found it kind of easy, so we are increasing the difficulty in some areas.

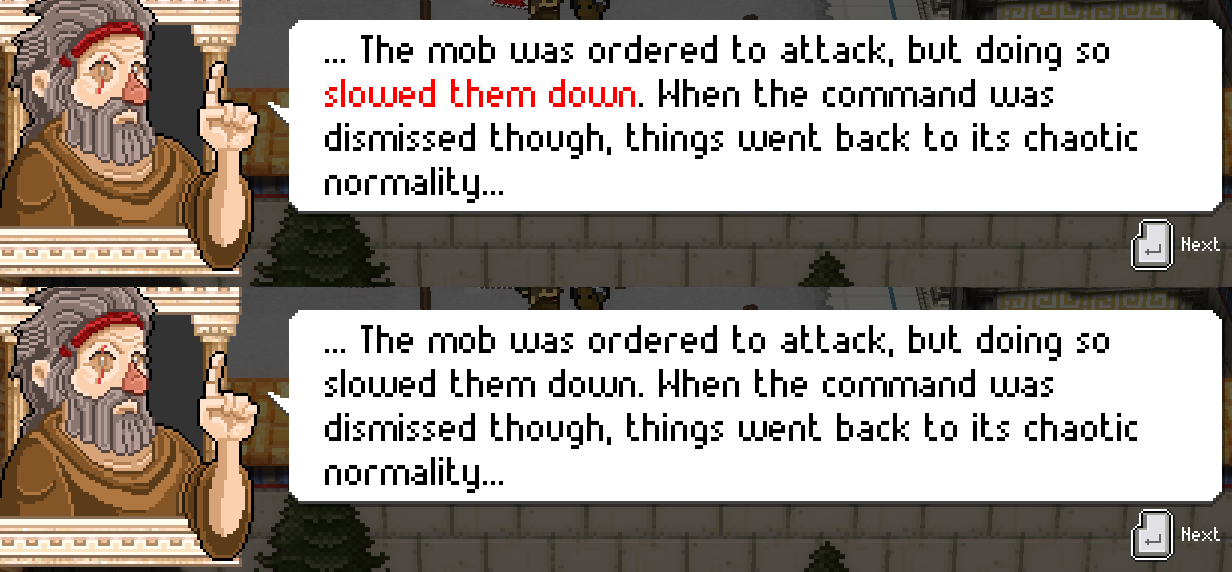

Some players pointed out something very interesting: they didn't get enough feedback from the attack. When you press Defense, you immediately know that your mob is defending; they all stay still and defend. When you run or collapse the mob, you don't have to focus your attention on any particular element; you simply see the mob and how it behaves, even with your peripheral vision. When you are attacking, though, there is not much visual change. Yes, the mob moves slower, but not enough to be noticeable. The first tester who pointed this out was our friend Juan from Heavy Boat.

This was one of the first changes we made during the playtesting. We used the sprint sound for the attack, permanently. It was rough, but tremendously effective. Gord almost killed us for that. Players realized much more quickly when they were attacking.

Besides this, we had another issue related to the attack. Players using a joystick were more inclined to understand that they had to hold the attack trigger (maybe because it's a trigger), but players using the mouse would constantly smash the mouse button. We knew this sometimes happened, but we weren't sure how to approach it.

We added small animations to the tutorial boards, just to make it clear that you should hold the mouse button down. But, maybe more interestingly, we decided that if players really want to smash their mice, well, we should just let them! So Seba added a small delay to the mouse input: if you let go of the mouse button for a second, the mob will keep attacking. This helps in two ways. For starters, players realize that they can hold the button down, and even if they keep smashing it, the mob is more likely to attack. Before this, if you didn't hold down the button, there was a good chance the mob wouldn't attack at all, because they have to get near the target and there is some anticipation in the attack animation. Now it feels much better, and it's still pretty responsive if you want to stop attacking immediately.
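To illustrate the idea of a release delay, here is a minimal, engine-agnostic sketch in Python. It assumes a per-frame update that receives whether the attack button is currently down and the frame time `dt`; the class name `AttackInput` and the 0.3-second grace value are hypothetical, since the post doesn't say how Seba implemented it or how long the delay is. The mob keeps attacking until the button has been up for longer than the grace period, so rapid clicking behaves like a continuous hold.

```python
class AttackInput:
    """Hypothetical input helper: keeps 'attacking' true for a short
    grace period after the attack button is released."""

    def __init__(self, grace_seconds: float = 0.3) -> None:
        # Assumed tuning value; the real delay used in Okhlos is not stated.
        self.grace_seconds = grace_seconds
        # Seconds since the button was last seen down (infinite = never pressed).
        self._since_pressed = float("inf")

    def update(self, button_down: bool, dt: float) -> bool:
        """Call once per frame; returns True while the mob should attack."""
        if button_down:
            self._since_pressed = 0.0
        else:
            self._since_pressed += dt
        return self._since_pressed < self.grace_seconds


# Simulate a player mashing the mouse at 60 FPS: 3 frames down, 6 frames up.
attack = AttackInput(grace_seconds=0.3)
frame_time = 1 / 60
mashing = ([True] * 3 + [False] * 6) * 5
states = [attack.update(down, frame_time) for down in mashing]
print("attacking on every frame while mashing:", all(states))
```

The length of `grace_seconds` is the whole trade-off: long enough that mashing never drops the attack, short enough that a deliberate release still stops the mob quickly.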

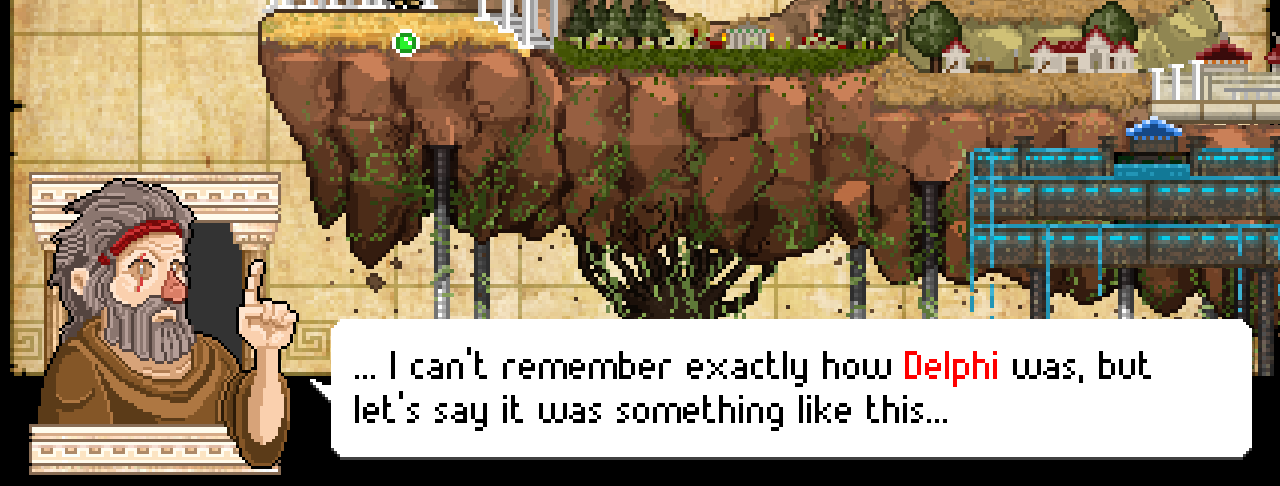

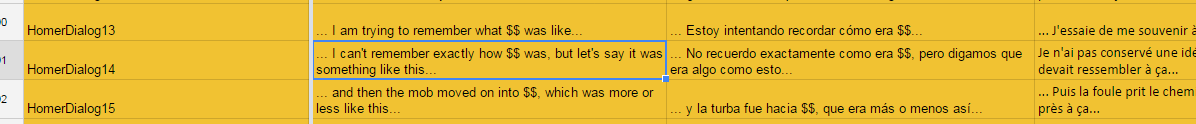

A small narrative change we made concerned the procedural phrases Homer tells you each time the mob moves to a new city. They were generic and interchangeable, but @DavTMar suggested that, to help the narrative, we should specifically mention which city the mob was going to. So we added the city's name to the phrases (and tweaked them a little). The phrases are still procedurally generated, but having the name of the city makes them feel more custom, and clearer to the player.
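The city-name change amounts to filling a slot in each procedurally chosen phrase. Here is a minimal Python sketch, assuming the phrases are stored as format-string templates with a `{city}` placeholder; the templates, the `travel_phrase` function and the city name below are hypothetical, not the game's actual text or code.

```python
import random

# Hypothetical templates for Homer's travel lines; each has a {city} slot.
TRAVEL_PHRASES = [
    "Onward to {city}! Its citizens have no idea what's coming.",
    "The mob marches on {city}.",
    "{city} awaits. Bring your loudest arguments.",
]


def travel_phrase(city: str, rng: random.Random) -> str:
    """Pick a random template and fill in the destination city's name."""
    template = rng.choice(TRAVEL_PHRASES)
    return template.format(city=city)


# Passing in a seeded generator keeps the choice reproducible for testing.
rng = random.Random(42)
print(travel_phrase("Sparta", rng))
```

The phrase is still picked at random, so the lines stay varied, but every result now names the destination.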

There was tons of other stuff. We moved the items from the HUD and placed them directly on the mob, which is really cool and organic, and having less UI feels very good. We added tutorial texts, revisited them, scaled up buttons; we kept ourselves very busy the week after the playtesting. And that was pretty rad. We were able to address most of the changes in less than a week, which was very cool as well, as we weren't expecting to finish all the changes so quickly.

So, there you have it! Playtesting often is healthy, cool, incredibly useful and cheap. You don't have many excuses for not doing it!

We wanted to close the entry with a HUGE THANKS to all the people who made the time to drop by our offices and helped make Okhlos better!

Drinking game: If you finished reading the article, then read it again but every time I said the word "playtesting" take a shot.

I can relate to this post... As a novice dev, the importance of playtesting is immeasurable... Great thread @Coffee Powered Machine!!!

Thanks!! It is! Play often, change often. (At least in early development)

I didn't even know the difference between functional testing and playtesting, nice article!

Thanks!

Really informative article! Thanks for putting it up!

-Tim

Thanks for the comment!

Very good article, I learned a thing or two!

Glad to hear that!