More shader work and Constraint Visualization

On the graphics side, more shaders have been converted to proper GLSL 1.3. Only a few shaders are left and then the conversion is done. Furthermore, a new render mode inspired by UT3 has been added: the half-render mode. If this mode is enabled, the game is rendered to a render target with half the screen width and half the screen height. During the finalization pass the rendered image is upscaled to the real screen size. This helps weaker machines since only 1/4 of the screen's pixels have to be rendered. The quality is not as good as rendering at full resolution from the start, but it improves render speed on machines where it would otherwise be too low to be acceptable.
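The arithmetic behind the 1/4 figure can be sketched in a few lines of Python. This is only an illustration; the function names are made up for this post and are not part of the engine.

```python
def half_render_size(screen_width, screen_height):
    """Size of the off-screen render target: half the screen in each dimension."""
    return screen_width // 2, screen_height // 2


def pixel_fraction(screen_width, screen_height):
    """Fraction of the full-screen pixel count that actually gets rendered."""
    target_width, target_height = half_render_size(screen_width, screen_height)
    return (target_width * target_height) / (screen_width * screen_height)


if __name__ == "__main__":
    print(half_render_size(1280, 720))
    print(pixel_fraction(1280, 720))
```

Halving both dimensions is what turns "half the screen size" into a quarter of the pixels to shade.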

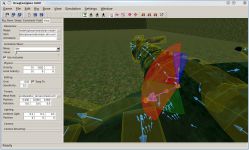

For the Rig Editor, constraint visualization has been added. This had been missing for quite some time and is a rather important tool for judging what the outcome of a constraint setup is going to be. Currently linear and angular limits are shown, as in the image. Furthermore, rig constraints now have their own class, separate from the collider constraints, which helps with Rig Resources in the long run.

Ragdolls and Spring-Wings

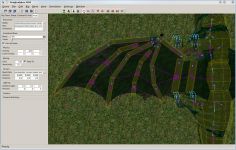

So far the Drag[en]gine had conventional rigid body physics using rigid constraints. For rigid bodies this works to some extent, but dynamic objects such as dragon wings cause trouble and cannot be done well this way. Now the engine supports spring parameters on all constraints. Using springs, the retention forces on a dynamic object like a wing can be simulated to some degree. The wing web on a spread (or unfolded) wing is under tension. This is one of the important properties that enable flight. Without muscle force applied, the wing web tends to pull together into a tension-free state (the physical principle of least energy). To achieve this behavior the wings have been constrained using a combination of rigid and spring constraints. This is only the first version but it can be improved upon. The image shows the setup of the dragon wing. The constraints (in purple) along the wing finger and the wing web are rigid constraints. The constraints perpendicular to them are the spring constraints.
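To give a feeling for what a spring constraint does, here is a minimal one-dimensional sketch in Python. It is not the engine's or Bullet's code: it assumes a plain Hooke's law spring with linear damping, and all names and parameter values are hypothetical. A stretched "wing web" segment with no muscle force applied relaxes back to its rest length.

```python
def spring_force(length, rest_length, stiffness, velocity, damping):
    """Hooke's law plus linear damping along the constraint axis."""
    return -stiffness * (length - rest_length) - damping * velocity


def relax(length, rest_length, stiffness=50.0, damping=5.0, mass=1.0,
          time_step=0.01, steps=2000):
    """Integrate with semi-implicit Euler and return the final length."""
    velocity = 0.0
    for _ in range(steps):
        force = spring_force(length, rest_length, stiffness, velocity, damping)
        velocity += (force / mass) * time_step  # update velocity first
        length += velocity * time_step          # then position
    return length


if __name__ == "__main__":
    # A segment stretched to twice its rest length pulls itself back together.
    print(relax(length=2.0, rest_length=1.0))
```

A rigid constraint would hold the length fixed instead; mixing both kinds is what lets the wing fingers stay stiff while the web between them gives way.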

Some earlier testing videos can be found here: Dragon Rag-Doll Test 2 (with spring-wings). Furthermore, there is a video where everything has been put together: Dragon Locomotion, Ragdoll Physics and Spring-Wing physics (also on YouTube: In-Game Dragon Locomotion Testing). The first sequence shows the dragon locomotion with body tilting and the feet-on-ground animator test. One of the strengths of the Drag[en]gine is the Animator system, which makes very sophisticated animations easy. In particular, multiple animators can be used together. In this example two animators are used, both loaded from files so you can mod them easily.

The first one animates the actor. The second aligns the feet with the ground. One advantage of this system is that the second animator is optional: should speed become an issue, you can simply not use it. This makes animations pluggable/moddable in a way you can't do with other game engines. The second sequence shows the same as the first one but from the eye camera. Here you can see well that, using the dragon locomotion with body tilting, your view stays exactly where you want it, resulting in smooth camera motion. The only issue right now is with the chase camera. It always faces where the actor is looking, which can be a bit cumbersome due to the up and down motion. The camera class will be modified later on to counter this effect.
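The idea of stacking animators, with the second one being optional, can be sketched like this. It is a toy model in Python and not the Animator system itself: the pose is just a dictionary, and the function names and numbers are invented for illustration.

```python
def locomotion_animator(pose):
    """First animator: sets the base walking pose (values are made up)."""
    new_pose = dict(pose)
    new_pose["left_foot_height"] = 0.3   # foot lifted mid-step
    new_pose["right_foot_height"] = 0.0  # foot assumed on flat ground
    return new_pose


def make_feet_on_ground_animator(ground_height):
    """Second, optional animator: keeps both feet at or above the ground."""
    def animator(pose):
        new_pose = dict(pose)
        for key in ("left_foot_height", "right_foot_height"):
            new_pose[key] = max(new_pose[key], ground_height)
        return new_pose
    return animator


def apply_animators(pose, animators):
    """Run the animators in order, each one refining the previous result."""
    for animator in animators:
        pose = animator(pose)
    return pose


# Cheap setup: locomotion only. Full setup: locomotion plus feet alignment.
cheap = apply_animators({}, [locomotion_animator])
full = apply_animators({}, [locomotion_animator, make_feet_on_ground_animator(0.1)])
```

Dropping the second entry from the list is all it takes to trade quality for speed.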

The third sequence shows the ragdoll (or, as I call it, a dragdoll) with spring-wings in action on a sloped mountain side. There are still some issues with the Bullet Physics library and collision objects falling through the ground. Erwin wants to add CCD (Continuous Collision Detection) back into Bullet at some point, but this is still a long way off [I have to come up with a hack to counter this problem until then. That is not a concern for game developers using this engine though, only my problem... *gasp*]. The fourth sequence is again the same as the third one but using the eye camera. An important note for those who do not know about "Dragon View Mode" yet: it simulates how a dragon sees the world using two views, one for each eye. Instead of the 90 degrees common for game characters you see 180 degrees. Hence you can see your own body if you turn your head far enough sideways.

Game Logic System

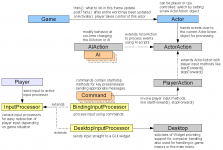

Now comes something a bit more technical but very important. A game with graphics and physics is nice, but without game mechanics it's useless. Many projects forget about this until the very end and then it ends in a coder's nightmare. The Drag[en]gine, on the other hand, has been designed from the very beginning with a sophisticated and flexible game logic system in mind. Recently I collapsed some classes together to improve the system, but first an image and then some explanations.

This may look a bit menacing at first but it's rather simple and nifty. On the right side there is the Actor class. It's one of the element classes in the game. Actors, as the name states, describe any kind of object which acts on its own or with the help of a player. Each element class has some per-frame update methods called by the game, as depicted by the arrow. Elements are made to "think", which tells them to act in one way or the other. Once the entire world has thought about its next move, "postThink" is called. This is, for example, the place where the feet-on-ground animator mentioned above is used. So far this is the way conventional games handle the processing. It is not very flexible though. Now enter the Drag[en]gine.
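The two-phase update described above can be sketched as follows. This is an illustration in Python rather than the engine's scripting language; the class layout, the `update_world` function and the log are assumptions, and only the think/postThink order is taken from the text.

```python
class Element:
    """Stand-in for an element class with per-frame update methods."""

    def __init__(self, name, log):
        self.name = name
        self.log = log

    def think(self, elapsed):
        # Decide what to do this frame.
        self.log.append((self.name, "think"))

    def post_think(self, elapsed):
        # Runs after every element has thought, e.g. feet-on-ground alignment.
        self.log.append((self.name, "postThink"))


def update_world(elements, elapsed):
    """Let the whole world think first, then call postThink on everyone."""
    for element in elements:
        element.think(elapsed)
    for element in elements:
        element.post_think(elapsed)


log = []
update_world([Element("dragon", log), Element("guard", log)], elapsed=0.016)
```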

Each Actor has one ActorAction object assigned. The Actor forwards the frame-update messages to the action object, which in turn handles the acting for the hosting Actor. This makes it possible to add specialized subclasses, in this example the AIAction and the PlayerAction, which are both subclasses of ActorAction. The AIAction object uses AI scripts and objects to figure out what to do. The nice part of this system is that you can modify the AI objects, or even assign a different AIAction object altogether, to change AI behavior on the fly. AI becomes pluggable/moddable like a LEGO system, which allows you to create complex AIs (more about that in the upcoming Epsylon news post about the Knowledge System).

On the other side there is the PlayerAction object, which takes input from a player and acts accordingly. Here again complex interactions with the game world can be broken down into small units. For example, in the Epsylon game handling different objects is represented by individual subclasses: SDEmptyHand, SDThunderpad and others. Changing the behavior of the player is as simple as switching the PlayerAction object. You can now work precisely on specific player interactions without disturbing other interactions. Furthermore, you can subclass player interactions to specialize and improve them. For example, pushing buttons is done using SDInteractiveButtonPanel, which provides the interaction. Two subclasses (SDKeyPad, SDElevatorPanel) then provide the specialized behavior for using a security key pad or an elevator button panel. The result is more robust script code which is also faster to write and simpler to maintain.
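Here is a rough sketch of the forwarding idea, written in Python for illustration only. The class names Actor, ActorAction, AIAction and PlayerAction come from the text; the method bodies and the `last_decision` attribute are made up to keep the example small.

```python
class ActorAction:
    """Base class: handles the acting for the hosting Actor."""

    def think(self, actor, elapsed):
        pass


class AIAction(ActorAction):
    def think(self, actor, elapsed):
        # A real AIAction would consult AI scripts and objects here.
        actor.last_decision = "ai: patrol"


class PlayerAction(ActorAction):
    def think(self, actor, elapsed):
        # A real PlayerAction would act on the player's input here.
        actor.last_decision = "player: follow input"


class Actor:
    def __init__(self, action):
        self.action = action
        self.last_decision = None

    def think(self, elapsed):
        # The Actor only forwards the frame update to its current action.
        self.action.think(self, elapsed)


dragon = Actor(AIAction())
dragon.think(0.016)
first = dragon.last_decision

dragon.action = PlayerAction()  # swap behavior on the fly
dragon.think(0.016)
second = dragon.last_decision
```

The Actor class itself never changes; only the object it forwards to does.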

Now how do you get the input to the player? Hard code it? No, this would be bad, but unfortunately it is often done in games. The Drag[en]gine places another nifty layer in between: the input processors. When a player issues an input, like pressing a key on the keyboard, an input event is generated which travels to the game scripts. There the event is handled by the current InputProcessor object. Again this makes it possible to simply switch input actions without a lot of coding work. The BindingInputProcessor, for example, takes the input and matches it against a list of Command objects. Commands define a key they respond to and specify actions for pressing and releasing the key. For example, the MoveForward command causes the player to start moving forward when the key is pressed and to stop when it is released. It's the command which actually interacts with the PlayerAction object currently set on the active player Actor object. This separates the input processing from the player interaction, again improving robustness and maintainability.
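The binding mechanism could look roughly like this. Again this is a Python sketch under assumptions: BindingInputProcessor, Command, MoveForward and PlayerAction are names from the text, while the method names (`on_key`, `pressed`, `released`) and the `moving_forward` flag are invented for the example.

```python
class PlayerAction:
    def __init__(self):
        self.moving_forward = False


class Actor:
    def __init__(self, action):
        self.action = action


class MoveForwardCommand:
    """A command knows its key and what to do on press and release."""

    def __init__(self, key):
        self.key = key

    def pressed(self, actor):
        actor.action.moving_forward = True

    def released(self, actor):
        actor.action.moving_forward = False


class BindingInputProcessor:
    """Matches input events against a list of commands."""

    def __init__(self, commands, player_actor):
        self.commands = commands
        self.player_actor = player_actor

    def on_key(self, key, is_pressed):
        for command in self.commands:
            if command.key == key:
                if is_pressed:
                    command.pressed(self.player_actor)
                else:
                    command.released(self.player_actor)


player = Actor(PlayerAction())
processor = BindingInputProcessor([MoveForwardCommand("w")], player)

processor.on_key("w", True)
while_held = player.action.moving_forward
processor.on_key("w", False)
after_release = player.action.moving_forward
```

Because the command talks to whatever PlayerAction is currently set on the actor, rebinding keys and swapping player behavior stay independent of each other.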

A second input processor is the DesktopInputProcessor. This one is used to handle computers in the game. It simply takes the input and forwards it as is to a Desktop object. Desktop objects are subclasses of the Widget class and are GUI components. Computers are nothing but GUI components with a Desktop as their root. The output is simply rendered to a texture instead of the screen. In-game menus and all other kinds of game menus are also handled using a DesktopInputProcessor, as they are nothing but a Window object located on a Desktop (one desktop is used for all in-game menus and so forth). Switching between handling a player, handling a computer, handling the menu or anything else is just a matter of switching the current InputProcessor object.
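Switching the current input processor can be pictured like this. The sketch is in Python and makes several assumptions: DesktopInputProcessor and Desktop are names from the text, but the `Game` class, the `on_key` method and the recording processor standing in for the player bindings are hypothetical.

```python
class Desktop:
    """Root GUI component; here it just records what it receives."""

    def __init__(self):
        self.received = []

    def on_key(self, key, is_pressed):
        self.received.append((key, is_pressed))


class DesktopInputProcessor:
    """Forwards input unchanged to a Desktop."""

    def __init__(self, desktop):
        self.desktop = desktop

    def on_key(self, key, is_pressed):
        self.desktop.on_key(key, is_pressed)


class RecordingInputProcessor:
    """Stand-in for the player's binding processor."""

    def __init__(self):
        self.received = []

    def on_key(self, key, is_pressed):
        self.received.append((key, is_pressed))


class Game:
    def __init__(self, input_processor):
        self.input_processor = input_processor

    def dispatch_key(self, key, is_pressed):
        # Every input event goes to whichever processor is current.
        self.input_processor.on_key(key, is_pressed)


player_input = RecordingInputProcessor()
computer = Desktop()

game = Game(player_input)
game.dispatch_key("w", True)                           # handled as player input
game.input_processor = DesktopInputProcessor(computer)
game.dispatch_key("a", True)                           # now typed into the in-game computer
```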

This has been quite a bit of text, but hopefully it gives an idea of how the Drag[en]gine Game Logic System works and why it is something to look forward to.

To Vote or not to Vote?

If you want to show your support for and interest in this game engine as well as the attached game project, you have to go to the Epsylon project page to place your vote, since you cannot vote for a game engine. The Epsylon project receives few news posts and media updates since nearly all of them go to the game engine project page. It is always a bit of a problem where to place the news posts or media since the two projects are entangled.

Outlook

Currently work continues on the Knowledge System of the Epsylon project. It's something very exciting, so I don't want to give away too much about it right now. Please be patient until more is revealed.


Nice work, the Dragon View Mode is a nice idea but it may take a bit of getting used to.

This is true. Therefore the old view mode with a single view and a dragon snout in the middle can be enabled if required. This restricts the player back to a 90 degree field of view but allows playing with a familiar setup.

Ah, it's good to have options. But I think I will put the time into readapting; it will be worth it in the long run, I feel.

I really like the idea of the new view mode, I would very much like trying that out to see if it would actually work. I don't like how the feet slide when you rotate the dragon.

I'm looking forward to more of this!

Yeah, the feet sliding while turning is a problem. There are two possible solutions, though neither is to my liking yet. The first one is to limit the rotation speed to a slow turn, in which case a matching animation can be made. This interferes with the playing experience though. The other would be to make the IKs stick to the ground instead of being down-projected. This can cause twisted legs if you turn fast though. It's a problem which does not affect the game engine, only the game code, so I am postponing it for a bit.

Great work! :)

Good work as always DL

For some reason the game logic image failed to show up. Fixed it now.