For the AI in the beta version, we’ve made many improvements over the demo. Everything is new, everything is better, and you’ll notice the result when playing the game. To do this, I’ve combined two common approaches to AI: a finite state machine (FSM) and behaviour trees. I was explaining the system to Alex today and he suggested I write up a post on it.

In short, a finite state machine models, well, a finite set of possible states for a system. You can think of it as a flowchart, where certain inputs result in certain predictable outputs. This is ideal for very simple AIs as it provides a pretty easy-to-understand system around which to base behaviour. The AI in the SVSH demo was a pretty simple FSM, and it worked well in the situations for which it was programmed. Others though, not so much.
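To make the flowchart idea concrete, here is a minimal sketch of an FSM as a transition table. The states and events (`PATROL`, `enemy_spotted` and so on) are made up for illustration and are not the actual SVSH states.

```python
from enum import Enum, auto


class State(Enum):
    # Hypothetical states, not the real SVSH ones.
    PATROL = auto()
    CHASE = auto()
    ATTACK = auto()


# (current state, input event) -> next state.
# Any pair that is not listed leaves the state unchanged.
TRANSITIONS = {
    (State.PATROL, "enemy_spotted"): State.CHASE,
    (State.CHASE, "enemy_in_range"): State.ATTACK,
    (State.CHASE, "enemy_lost"): State.PATROL,
    (State.ATTACK, "enemy_out_of_range"): State.CHASE,
    (State.ATTACK, "enemy_destroyed"): State.PATROL,
}


def step(state, event):
    """Return the next state for one input event."""
    return TRANSITIONS.get((state, event), state)


def run(events, state=State.PATROL):
    """Feed a sequence of events through the machine and return the final state."""
    for event in events:
        state = step(state, event)
    return state
```

Every behaviour has to be spelled out as an explicit entry in the table, which is exactly why a machine like this is easy to read when small and painful to extend when large.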

This is because FSMs are limited. They can model only a finite set of states, so the number of outcomes is limited by the number of inputs you are willing to deal with. Additionally, they are notoriously hard to expand and somewhat prone to confusing spaghetti decision trees. This was very much the case in the SVSH demo, where I wanted at one point to add “force an attack on this player” functionality. This was tons of work! I didn’t want to repeat that.

For the full version of SVSH, I wanted something better. I decided to continue to use FSMs for low-level tasks, such as AI pathfinding and aiming. These are relatively simple, and can often be broken into component parts quite effectively - for example, if an object is in front of me, turn and fire engines.

I expanded our basic FSM implementation for navigation into what is called a fuzzy state machine (FuSM). This system builds on the FSM concept, but allows for decisions that are not binary (i.e. “target is to the left” or “target is to the right”) and are instead driven by weights. In this way we can have more complex low-level decisions.

A good example of this is basic object dodging. Instead of “if obstacle in front, turn left and fire engines”, I use a number of additional predictors, such as final goal location, speed and the thrust-to-weight ratio of the ship, to determine which way to turn. By using a FuSM in this way, I can get more realistic and less predictable behaviour from our ships. By tuning these weights, we can also vary behaviour between ships, for example to create a Hard versus an Easy AI.
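As a rough illustration of a weighted dodge decision, here is a small sketch. It is not the SVSH code: the `Weights` fields, the input ranges and the scoring formula are all assumptions chosen to show the idea of blending several predictors instead of hard-coding "turn left".

```python
from dataclasses import dataclass


@dataclass
class Weights:
    # Hypothetical tuning knobs; varying them per ship varies behaviour.
    goal: float = 1.0      # how much the ship wants to turn toward its final goal
    momentum: float = 0.5  # how much it prefers to keep drifting the way it already is


def dodge_direction(goal_bearing, lateral_velocity, thrust_to_weight, weights=None):
    """Pick a dodge direction and a degree of preference in [0.5, 1].

    goal_bearing:     -1 (goal far left) .. +1 (goal far right)
    lateral_velocity: -1 (drifting left) .. +1 (drifting right)
    thrust_to_weight: clamped to 0..1; low values mean a sluggish ship
    """
    w = weights or Weights()
    agility = max(0.0, min(1.0, thrust_to_weight))
    sluggishness = 1.0 - agility  # heavy ships care more about existing momentum

    left = (w.goal * max(0.0, -goal_bearing)
            + w.momentum * sluggishness * max(0.0, -lateral_velocity))
    right = (w.goal * max(0.0, goal_bearing)
             + w.momentum * sluggishness * max(0.0, lateral_velocity))

    total = left + right
    if total == 0.0:
        return "left", 0.5  # no preference either way; arbitrary tie-break
    if left >= right:
        return "left", left / total
    return "right", right / total
```

With these assumed inputs, a nimble ship turns toward its goal, while a heavy ship that is already drifting the other way keeps going with its momentum. Changing the `Weights` values is one way the same code could produce different personalities.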

This FuSM business is all fine and good for low-level tasks, but for actual decision-making, we often need a more robust solution. No matter how fancy we make the FSM, we can never handle all the possible situations that an AI player might encounter. To solve this problem, we use a more advanced AI concept called behaviour trees.

Behaviour trees are a way of organizing AI behaviour into a hierarchy of nodes and sub-nodes, each of which represents some action an AI can take. They are powerful, versatile and frequently used nowadays in game development. The concept is quite… large, and if you’re interested, I suggest this article written by Bjoern Knafla. It’s the best overview I’ve found, though quite technical.

I use a basic implementation of behaviour trees in SVSH, custom written by myself mostly as a learning exercise. We use this to allow the AI to decide what generally to do. This includes choosing targets, choosing paths and attacking targets. All of these actions are complex, with many possible states, so a behaviour tree is a much better choice than an FSM.

Once the tree has decided what to do, it often passes off the job to a smaller FSM. For example, once it has chosen a target and a route, it passes off actual obstacle avoidance to the navigation module, which reduces complexity somewhat. Target prediction and aiming are also handled in this way.
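For readers who have not seen a behaviour tree before, here is a minimal sketch of the pattern described above: the tree picks a target and a route, then hands the job off to a lower-level module. The node classes are the textbook `Sequence` and `Selector`; the leaf functions and the `ctx` dictionary keys are hypothetical stand-ins, not the SVSH implementation.

```python
from enum import Enum


class Status(Enum):
    SUCCESS = 1
    FAILURE = 2
    RUNNING = 3


class Action:
    """Leaf node wrapping a function that takes the shared context dict."""
    def __init__(self, fn):
        self.fn = fn

    def tick(self, ctx):
        return self.fn(ctx)


class Sequence:
    """Runs children in order; stops at the first one that does not succeed."""
    def __init__(self, *children):
        self.children = children

    def tick(self, ctx):
        for child in self.children:
            status = child.tick(ctx)
            if status is not Status.SUCCESS:
                return status
        return Status.SUCCESS


class Selector:
    """Tries children in order; stops at the first one that does not fail."""
    def __init__(self, *children):
        self.children = children

    def tick(self, ctx):
        for child in self.children:
            status = child.tick(ctx)
            if status is not Status.FAILURE:
                return status
        return Status.FAILURE


def choose_target(ctx):
    # ctx["enemies"] maps an enemy name to its distance (assumed layout).
    if not ctx["enemies"]:
        return Status.FAILURE
    ctx["target"] = min(ctx["enemies"], key=ctx["enemies"].get)
    return Status.SUCCESS


def plot_route(ctx):
    ctx["route"] = ["current_position", ctx["target"]]  # placeholder route
    return Status.SUCCESS


def hand_off_to_navigation(ctx):
    # Stand-in for passing the job to the low-level navigation state machine.
    ctx["nav_job"] = (ctx["target"], ctx["route"])
    return Status.RUNNING


def idle(ctx):
    ctx["nav_job"] = None
    return Status.SUCCESS


root = Selector(
    Sequence(Action(choose_target), Action(plot_route), Action(hand_off_to_navigation)),
    Action(idle),  # fallback when there is nothing to attack
)
```

Ticking `root` with a context such as `{"enemies": {"a": 50.0, "b": 12.0}}` selects the nearest enemy and leaves a navigation job in the context; with no enemies, the selector falls through to `idle`.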

The SVSH AI can also switch between sub-trees which govern specific behaviour. I have, for example, three primary trees at the moment - an Aggressive, Defensive and Standard tree. They define a certain set of actions to take (or ignore) in order to behave correctly. These can be swapped out on the fly, which makes ordering an AI around much simpler.

The most obvious benefit of this rewrite is, of course, better AI. We also have far more control over AI difficulty, with particular behaviour trees for our six difficulty levels. The coolest by far, though, is the ability to order your AI buddies around.
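The tree swapping mentioned above can be sketched in a few lines. This is only an assumption about how such a switch might look: each "tree" is reduced to a plain function, and the action names and context keys are invented for the example.

```python
def aggressive(ctx):
    # Always looking for a fight.
    return "attack" if ctx["enemies_visible"] else "hunt"


def defensive(ctx):
    # Stays near the leader and reacts to threats against them.
    return "intercept" if ctx["leader_under_attack"] else "escort"


def standard(ctx):
    # Goes about its own business.
    return "attack" if ctx["enemies_visible"] else "patrol"


# Hypothetical registry of sub-trees, keyed by order name.
TREES = {"aggressive": aggressive, "defensive": defensive, "standard": standard}


class AIPilot:
    def __init__(self, trees, active="standard"):
        self.trees = trees
        self.active = active

    def order(self, name):
        """Swap the active sub-tree; takes effect on the next tick."""
        if name not in self.trees:
            raise KeyError(f"unknown order: {name}")
        self.active = name

    def tick(self, ctx):
        return self.trees[self.active](ctx)
```

Because the pilot only stores the name of the active tree, giving an order is a single assignment, and the rest of the AI does not need to know that anything changed.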

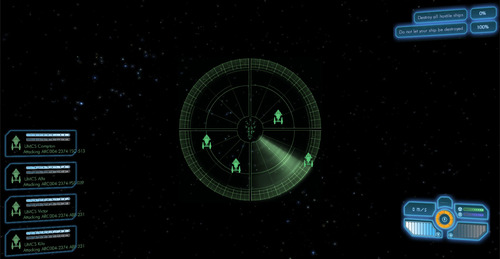

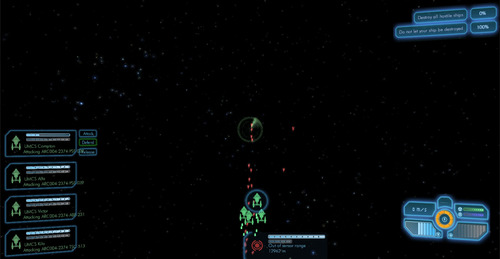

In the demo, some of you may remember the maps screen, which gave you a very zoomed-out view of the battlefield. This screen has been overhauled into the Fleet screen, which shows your allies and also lets you give them specific orders. Currently, you can tell ships to attack single-mindedly, defend you from attack, or go about their own business (there are also hotkeys for fleet commands).

This adds a totally new dynamic to battles, where you can concentrate your fire, order ships to attack particular targets, or get some help during a close fight.

That’s all for today, folks!

Then it is finally possible to kill the dreadnought :D