Greetings, dear reader. My name's Riku. I'm one of the programmers on the team, and I'll be your tour guide to the world of Interplanetary development today. We decided to try something different this time and take a more in-depth look at the technicalities of the development process. The programmers-to-others ratio in the team is so skewed anyways, it's about time we take some load off the poor bastards' overworked backs and represent ourselves to the public!

As you may know, we are making Interplanetary with Unity, and started the development by creating a working prototype of the game. One purpose of the prototype was to get ourselves familiar with Unity development, as we hadn't really used it much in the past. We did indeed come across several quirks that we're glad to be aware of when working on the next build of the game. One of those quirks was the graphical user interface framework Unity offers out of the box.

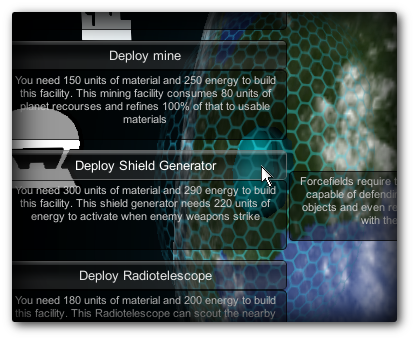

Our first builds of the prototype had a bug where the UI didn't take into account elements placed on top of each other. Clicking a button in the GUI caused everything behind the button to be clicked as well.

That's not working as intended at all! This behaviour extended to the 3D scene used for things like placing buildings on a planet. It caused bugs where, for instance, selecting a new building from a menu also immediately placed the newly selected building on the spot behind the menu item. We fixed the issue in the prototype with a wide selection of conditional statements scattered around the code, but that wasn't really good enough for us. It was a pain in the rear to maintain, and we figured something should be done about it.

Fortunately, we've had the privilege of having a programmer much more experienced than us on hand to consult. Jukka Jylänki from LudoCraft is no newcomer to Unity development, and offered to walk us through a solution to our UI problems.

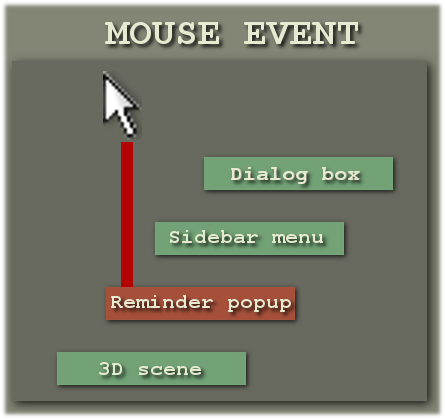

In Unity, each script attached to a game object gets the chance to implement several methods the Unity engine then automatically calls at suitable times. One of those methods is OnGUI(), which gets called for each GUI event detected by the engine. GUI events include things like mouse buttons being pressed or a GUI element being redrawn. For each such event, the OnGUI() method of each script implementing it gets called. In theory this offers a convenient way for each thing that cares about these events to process them, for instance for a GUI button to check whether a mouse click event caused it to be pressed. In theory. In practice, the system has problems: there is no easy way to determine or enforce the order in which the OnGUI() of each element gets called, which makes it a hairy endeavor to implement things like buttons on top of each other. With the default Unity behaviour it's not very convenient to find the topmost thing that cares about mouse clicks and ignore all elements behind it.

To solve this, more control over the OnGUI() calls is needed. This can be achieved by creating a single GUI master object, which is the only object in the game that implements the default Unity OnGUI() method. It holds a collection of custom-made GUI elements, sorted by depth. When a mouse button is clicked, iterating through the elements front to back should easily find the topmost GUI element that the mouse cursor rests on top of. If we don't hit any GUI buttons with the mouse, we just let the 3D scene handle the event and do things like placing a building in the clicked position, right? Right.
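To make the idea a bit more concrete, here is a minimal, engine-independent sketch of such a master object, written in Python rather than as Unity script code. Everything in it (`Element`, `GuiMaster`, `click`, the `on_scene_click` callback, and the convention that a smaller `depth` means closer to the viewer) is a hypothetical name or assumption made up for illustration. It is not our actual code and not Unity's API.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Element:
    """A hypothetical custom GUI element with a screen rectangle and a depth."""
    name: str
    rect: Tuple[float, float, float, float]  # x, y, width, height
    depth: int  # assumption: smaller depth means closer to the viewer

    def contains(self, x: float, y: float) -> bool:
        rx, ry, rw, rh = self.rect
        return rx <= x < rx + rw and ry <= y < ry + rh


class GuiMaster:
    """The single object that receives GUI events and owns all elements."""

    def __init__(self, on_scene_click: Callable[[float, float], None]) -> None:
        self.elements: List[Element] = []
        self.on_scene_click = on_scene_click

    def add(self, element: Element) -> None:
        # Keep the collection sorted front to back at all times.
        self.elements.append(element)
        self.elements.sort(key=lambda e: e.depth)

    def click(self, x: float, y: float) -> Optional[str]:
        # Front to back: the first element under the cursor is the topmost
        # one, so it takes the click and nothing behind it ever sees it.
        for element in self.elements:
            if element.contains(x, y):
                return element.name
        # No GUI element was hit, so the 3D scene gets the click.
        self.on_scene_click(x, y)
        return None
```

With a button (depth 0) placed on top of a menu (depth 1), a click on the button's area reaches only the button, a click elsewhere on the menu reaches only the menu, and a click outside both falls through to the scene callback.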

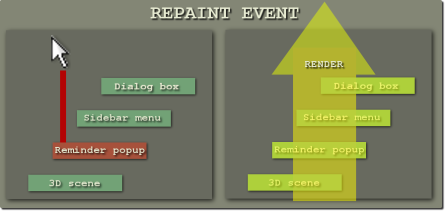

It just so happens that in addition to handling mouse clicks, we are also supposed to draw the elements during an OnGUI() call when a repaint event occurs. The elements need to be drawn in the opposite order, back to front, so they properly appear on top of each other. Because of this, each time OnGUI() gets called, we need to check what kind of event caused it and iterate through all the elements in a different order depending on that. But wait, there's more! When drawing the elements, we want to highlight the topmost GUI button the mouse is resting on. So when a repaint event occurs, we need to first travel through the stack of elements front to back, testing against the current mouse coordinates, until we hit a button. This is the topmost element under the mouse cursor, and the one we want to highlight. So we take note of this element, and go back to the beginning. We then start drawing the elements from the other side, back to front. At each element, we check whether it is the one we marked for highlighting and tell it whether or not it should be highlighted.
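The two passes described above can be sketched roughly like this. Again, this is plain Python for illustration only. The `repaint` function, the `(name, rect)` tuples and the returned list of draw calls are all hypothetical stand-ins, and the sketch assumes the element list is already sorted front to back.

```python
from typing import List, Optional, Tuple

Rect = Tuple[float, float, float, float]  # x, y, width, height


def contains(rect: Rect, x: float, y: float) -> bool:
    rx, ry, rw, rh = rect
    return rx <= x < rx + rw and ry <= y < ry + rh


def repaint(elements: List[Tuple[str, Rect]],
            mouse: Tuple[float, float]) -> List[Tuple[str, bool]]:
    """Return (name, highlighted) draw calls in the order they are issued.

    `elements` is assumed to be sorted front to back.
    """
    # Pass 1, front to back: find the topmost element under the cursor.
    hovered: Optional[int] = None
    for index, (_, rect) in enumerate(elements):
        if contains(rect, mouse[0], mouse[1]):
            hovered = index
            break

    # Pass 2, back to front: draw everything, so that nearer elements are
    # painted over farther ones, and flag only the hovered element.
    draw_calls: List[Tuple[str, bool]] = []
    for index in range(len(elements) - 1, -1, -1):
        name, _ = elements[index]
        draw_calls.append((name, index == hovered))
    return draw_calls
```

The first loop stops at the first hit, so an element hidden behind another one under the cursor never gets the highlight, even though it is still drawn.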

This kind of solution does solve the issue, but such a basic problem really ought to have a more elegant answer. Due to issues like this, we've found Unity's default GUI solution somewhat lacking, and are currently experimenting with third-party GUI libraries from the Unity Asset Store. They seem to do a lot of the heavy lifting for us when building the UI for our game, so we can direct our efforts elsewhere and spend less manpower maintaining and managing our own custom GUI code.

And here ends the story of Interplanetary GUI development. We've hopefully communicated something about what actually goes on in the day-to-day development of the game. This post is kind of an experiment for us, and we have no idea how interested you readers are in this type of slightly more technical content. Please let us know what you think. Your response may very well dictate if the boss-man lets us programmers take the wheel again!

Hell, I don't even know what this game is, but the problem seems interesting enough for me to stay around.

Why not just do it the hard way and toggle a boolean when you open a menu, then when anything happens it checks which boolean furthest down is true.

Like, let's say you have two buttons on top of each other, when the one furthest back is made/drawn a boolean is toggled and when the other button is made another boolean is toggled, when you then press the button it checks "is the second boolean true", if so then just do what the second button's supposed to do and ignore the rest.

Personally I've never touched the unity engine but I assume you can still manhandle it, even if it's a slow and tedious process.

Might not be elegant either, but it works.

If I understand your suggestion correctly, it is a very similar solution. In the post above instead of booleans the system just makes the assumption that the container containing the GUI elements is sorted by depth. It can then iterate through the elements in order without having to compare booleans. Other than that, it works on a similar principle. When the topmost element that should handle an event is found, a simple break statement then ensures that all elements after that don't receive the event.

It also indeed is sort of manhandling the Unity engine, as it bypasses all the mechanisms Unity offers and requires a custom made system. In theory the engine does include features to incorporate GUI elements nicely. For instance, after an event has been handled, it can be marked as "used", so everything receiving that event afterwards knows to ignore it. In practice, that doesn't work quite as well as advertised, and is only one of the shortcomings we encountered when developing the GUI.
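For the curious, the "used" idea can be illustrated with a small toy model. This is Python with made-up names (`GuiEvent`, `make_handler`, `broadcast`), not Unity's API, and it only models the general principle: every handler receives every event, and a handler that reacts marks the event as consumed.

```python
from typing import Callable, List, Tuple

Rect = Tuple[float, float, float, float]  # x, y, width, height


class GuiEvent:
    """A toy event that can be marked as consumed."""

    def __init__(self, kind: str, position: Tuple[float, float]) -> None:
        self.kind = kind
        self.position = position
        self.used = False

    def use(self) -> None:
        self.used = True


def make_handler(name: str, rect: Rect,
                 log: List[str]) -> Callable[[GuiEvent], None]:
    """Build a handler that reacts to events inside `rect` and consumes them."""
    def handler(event: GuiEvent) -> None:
        if event.used:
            return  # someone earlier already handled this event
        x, y = event.position
        rx, ry, rw, rh = rect
        if rx <= x < rx + rw and ry <= y < ry + rh:
            log.append(name)
            event.use()
    return handler


def broadcast(handlers: List[Callable[[GuiEvent], None]],
              event: GuiEvent) -> None:
    # Every handler sees every event, in whatever order they are listed.
    for handler in handlers:
        handler(event)
```

If the button's handler runs before the scene's handler, only the button reacts to a click on it. If the order is reversed, the scene consumes the click first and the button never sees it. In this toy model, at least, the flag is only as good as the call order, which is the thing the post says is hard to control.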

The point is, such manhandling shouldn't be required, and isn't if one is using some of the third-party libraries available. As time is of the essence, something being slow, tedious and not elegant very much is a concern to us. With a library doing that work, we can spend the effort elsewhere, where outsourcing the solutions isn't as easily done.

Our intention isn't to blow the issue out of proportion, though. This was just something simple I figured would be suitable for testing the waters for people's interest in hearing about our adventures in Unityland. We're paying close attention to what kind of response this piece of content receives.